Lecture 8-2: Convex Optimization: Part 2#

Download the original slides: CMSE382-Lec8_2.pdf

Warning

This is an AI-generated transcript of the lecture slides and may contain errors or inaccuracies. Please refer to the original course materials for authoritative content.

This Lecture#

Topics:

The orthogonal projection operator

Projection on the non-negative orthant

Projection on \(B[\mathbf{0},r]\)

Announcements:

Homework 4 due Friday

Orthogonal Projection Operator#

Orthogonal projection#

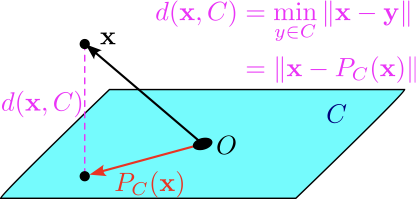

Definition (Orthogonal projection operator)

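Given a nonempty closed convex set \(C\), the orthogonal projection operator \(P_C:\mathbb{R}^n \to C\) is defined by

\(P_C(\mathbf{x}) = \text{argmin}\{\|\mathbf{y} - \mathbf{x}\|^2 : \mathbf{y} \in C\}.\)

Returns the vector in \(C\) that is closest to the input vector \(\mathbf{x}\).

Is a convex optimization problem:

\(\min_{\mathbf{y}} \; \|\mathbf{y} - \mathbf{x}\|^2 \quad \text{subject to} \quad \mathbf{y} \in C.\)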

Orthogonal projection: First projection theorem#

Theorem (first projection theorem)

Let \(C\) be a nonempty closed convex set. Then the problem \(P_C(\mathbf{x})=\text{argmin}\{\|\mathbf{y} - \mathbf{x}\|^2: \mathbf{y} \in C\}\) has a unique optimal solution.

Computing \(P_C(\mathbf{x})\) can be difficult. Examples where it is easy to compute:

Projection on the non-negative orthant.

Projection onto balls.

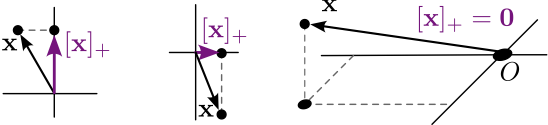

Non-negative part of a vector#

Definition (Non-negative part of a vector)

For \(\alpha \in \mathbb{R}\), the non-negative part of \(\alpha\) is \([\alpha]_+ = \begin{cases}\alpha, & \alpha \geq 0\\ 0, & \alpha<0. \end{cases}\)

For a vector \(\mathbf{v} \in \mathbb{R}^n\), the non-negative part of \(\mathbf{v}\) is \([\mathbf{v}]_+ = \begin{bmatrix} [v_1]_+ \\ [v_2]_+ \\ \vdots \\ [v_n]_+ \end{bmatrix}\)
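The entrywise definition above maps directly onto an elementwise maximum with zero. The following is a minimal NumPy sketch; the function name `nonneg_part` and the sample vector are illustrative choices, not part of the lecture.

```python
import numpy as np

def nonneg_part(v):
    """Return [v]_+ : every negative entry is replaced by 0, other entries are kept."""
    v = np.asarray(v, dtype=float)
    return np.maximum(v, 0.0)

# Illustrative input (hypothetical values).
v = np.array([1.5, -2.0, 0.0, -0.3, 4.0])
v_plus = nonneg_part(v)
print(v_plus)
```

The same function also accepts a scalar, which corresponds to the definition of \([\alpha]_+\) for \(\alpha \in \mathbb{R}\).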

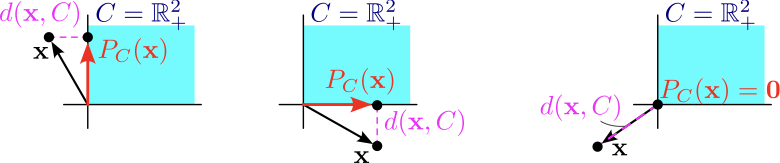

Orthogonal projection: Projection on the non-negative orthant#

Let \(C=\mathbb{R}^n_{+}\). To find the orthogonal projection of \(\mathbf{x} \in \mathbb{R}^n\) onto \(\mathbb{R}^n_{+}\):

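\(\underline{P_C(\mathbf{x})}\): \(\text{argmin}\{\|\mathbf{y} - \mathbf{x}\|^2 : y_1, y_2, \ldots, y_n \geq 0\}\)

\(\underline{\text{Equivalently,}}\) \(\text{argmin}\left\{\sum_{i=1}^n (y_i - x_i)^2 : y_1, y_2, \ldots, y_n \geq 0\right\}\)

\(\underline{\text{Separable}}\): for each \(i\), solve \(\min\{(y_i - x_i)^2 : y_i \geq 0\}\), whose solution is \(y_i = [x_i]_+\).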

Definition (Separable convex optimization problems)

A convex optimization problem is called separable if the objective function and the constraints can be decomposed into components that each depend on one control/decision variable:

Objective function: \(f(\mathbf{x})=\sum_{i} f_i(\mathbf{x}_i)\).

Constraint(s): a single constraint function \(g(\mathbf{x})=\sum_{i} g_i(\mathbf{x}_i)\), or a family of constraints \(\{g_i(\mathbf{x}_i)\}_{i}\), one per variable.
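Projection onto \(\mathbb{R}^n_{+}\) is one instance of a separable problem: the objective \(\|\mathbf{y}-\mathbf{x}\|^2 = \sum_i (y_i - x_i)^2\) is a sum of one-variable terms, and each constraint \(y_i \geq 0\) involves a single coordinate. The sketch below solves each scalar problem independently and compares the result with the non-negative part of \(\mathbf{x}\). The grid search is only an illustration chosen for this example, not the method used in the lecture, and the helper name `solve_coordinate` and the data are hypothetical.

```python
import numpy as np

def solve_coordinate(x_i, grid):
    """Approximately minimize (y - x_i)^2 over the feasible grid points (all >= 0)."""
    return grid[np.argmin((grid - x_i) ** 2)]

x = np.array([2.0, -1.0, 0.5, -3.0])
grid = np.linspace(0.0, 5.0, 5001)  # feasible candidates y >= 0, spacing 0.001

# Because the problem is separable, each coordinate is handled on its own.
y_separable = np.array([solve_coordinate(x_i, grid) for x_i in x])

# Entrywise non-negative part of x, for comparison.
y_closed_form = np.maximum(x, 0.0)

print(y_separable)
print(np.allclose(y_separable, y_closed_form, atol=1e-3))
```

Solving \(n\) one-dimensional problems this way avoids searching over \(\mathbb{R}^n\) jointly, which is the practical payoff of separability.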

Orthogonal projection: Projection on the non-negative orthant#

Let \(C=\mathbb{R}^n_{+}\). The orthogonal projection of \(\mathbf{x} \in \mathbb{R}^n\) onto \(\mathbb{R}^n_{+} = \{\mathbf{y} \in \mathbb{R}^n \mid y_i \geq 0 \; \forall i\}\) is computed coordinate by coordinate: its \(i\)-th entry is \([x_i]_+\).

Orthogonal projection onto \(\mathbb{R}^n_{+}\)

The orthogonal projection operator onto \(\mathbb{R}^n_{+}\) is

\(P_{\mathbb{R}^n_{+}}(\mathbf{x}) = [\mathbf{x}]_+.\)

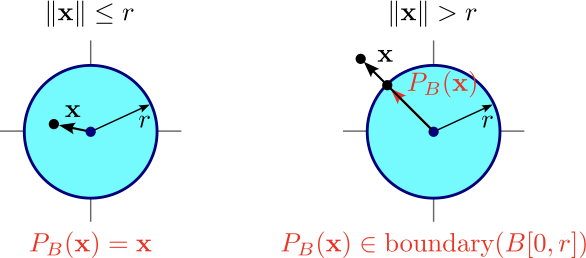

Orthogonal projection: Projection onto balls#

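Let \(C=B[\mathbf{0},r]=\{\mathbf{y}:\|\mathbf{y}\| \leq r\}\). The projection of \(\mathbf{x}\) onto \(C\) is

\(P_{B[\mathbf{0},r]}(\mathbf{x}) = \begin{cases}\mathbf{x}, & \|\mathbf{x}\| \leq r\\ r\dfrac{\mathbf{x}}{\|\mathbf{x}\|}, & \|\mathbf{x}\| > r. \end{cases}\)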
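As a minimal sketch, the standard closed form for projecting onto the Euclidean ball \(B[\mathbf{0},r]\) leaves \(\mathbf{x}\) unchanged when \(\|\mathbf{x}\| \leq r\) and otherwise rescales it to have norm \(r\). The function name `project_ball`, the assumption \(r > 0\), and the test points below are illustrative choices, not taken from the slides.

```python
import numpy as np

def project_ball(x, r):
    """Project x onto the closed Euclidean ball B[0, r] (assumes r > 0)."""
    x = np.asarray(x, dtype=float)
    norm = np.linalg.norm(x)
    if norm <= r:
        # x already lies in the ball, so it is its own projection.
        return x.copy()
    # Otherwise scale x back to the boundary along the ray from the origin.
    return (r / norm) * x

inside = project_ball([0.3, 0.4], r=1.0)   # norm 0.5, already feasible
outside = project_ball([3.0, 4.0], r=1.0)  # norm 5, gets rescaled
print(inside, outside)
print(np.linalg.norm(outside))
```

The branch on `norm <= r` also avoids dividing by zero when \(\mathbf{x} = \mathbf{0}\), since the origin always lies inside the ball.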