Lecture 11-1: The KKT Conditions

Download the original slides: CMSE382-Lec11_1.pdf

Warning

This is an AI-generated transcript of the lecture slides and may contain errors or inaccuracies. Please refer to the original course materials for authoritative content.

This Lecture

Topics:

Feasible descent direction

Inequality and equality constrained problems

Example: Equality constrained

Example: KKT not satisfied

Announcements:

None

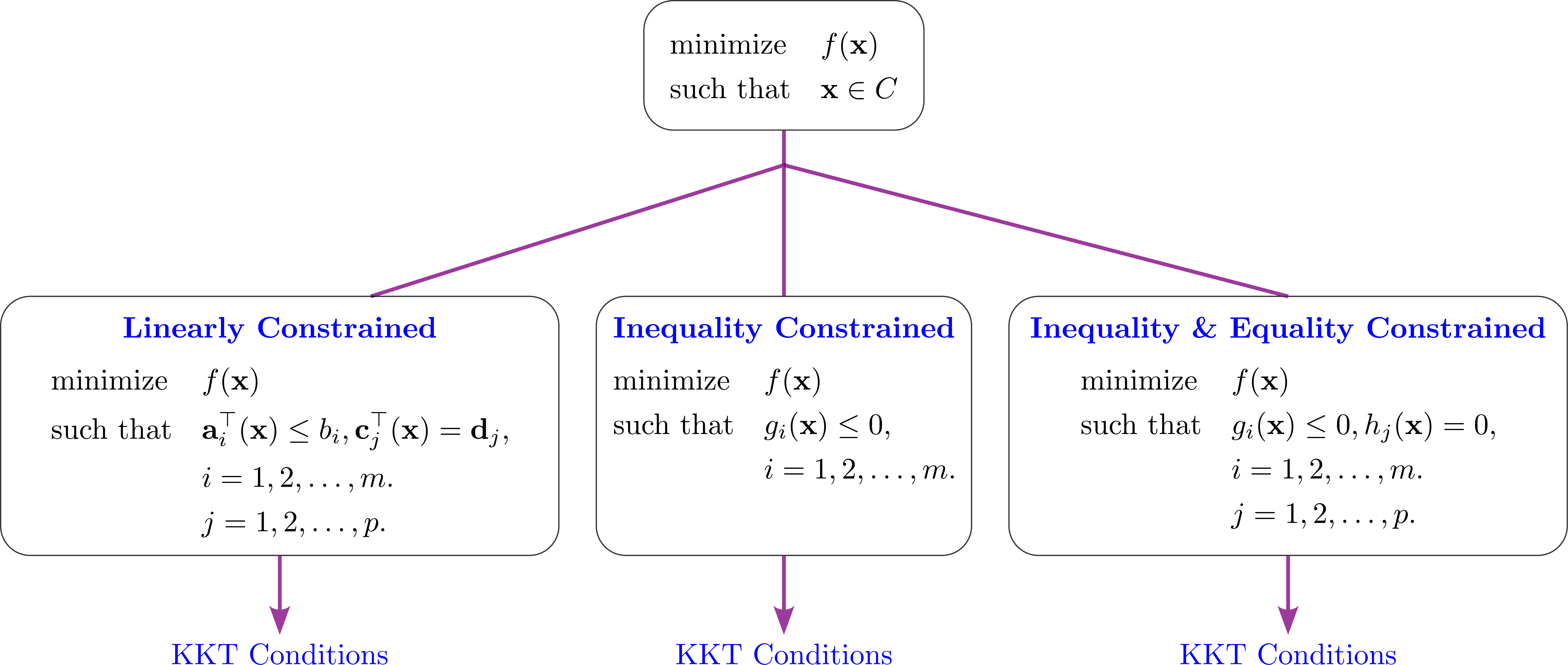

Inequality and equality constrained problems

Outlook

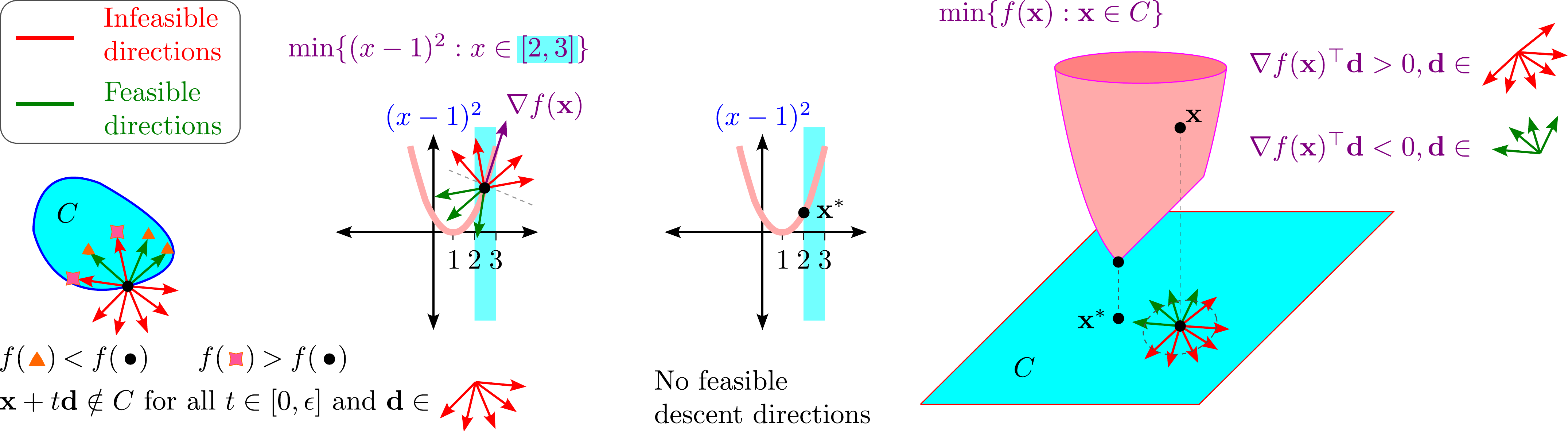

Idea: Feasible descent direction

Feasible descent direction

Definition (Feasible descent direction)

Consider the optimization problem

\[\min_{\mathbf{x}} \; f(\mathbf{x}) \quad \text{s.t.} \quad \mathbf{x} \in C,\]

where \(f\) is a continuously differentiable function over the set \(C \subseteq \mathbb{R}^n\).

Then a vector \(\mathbf{d} \neq 0\) is called a feasible descent direction at \(\mathbf{x} \in C\) if

\(\nabla f(\mathbf{x})^{\top} \mathbf{d} < 0\), and

there exists \(\varepsilon > 0\) such that \(\mathbf{x} + t \mathbf{d} \in C\) for all \(t \in [0,\varepsilon]\).

Lemma

If \(\mathbf{x}^*\) is a local optimal solution, then there are no feasible descent directions at \(\mathbf{x}^*\).

Idea: at a local optimum, there is no direction you can move in that both decreases the function’s value and keeps you within the problem’s constraints.
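The two conditions in the definition can be tested numerically. The sketch below is only a heuristic under stated assumptions: the helper name `is_feasible_descent`, the objective \(f(\mathbf{x}) = x_1^2 + x_2^2\), and the box \(C = [1,2] \times [1,2]\) are illustrative choices that do not come from the slides, and the feasibility condition is sampled at finitely many step sizes rather than proven.

```python
def is_feasible_descent(grad_f, in_C, x, d, eps=1e-3, samples=50):
    """Heuristically test whether d is a feasible descent direction at x.

    grad_f(x) returns the gradient as a list; in_C(x) returns True when x is in C.
    Feasibility is only sampled on (0, eps], so this is a sanity check, not a proof.
    """
    if all(di == 0 for di in d):
        return False  # the definition requires d != 0
    # Condition 1: the directional derivative grad f(x)^T d must be negative.
    slope = sum(g * di for g, di in zip(grad_f(x), d))
    if slope >= 0:
        return False
    # Condition 2: x + t d must stay in C for small t > 0 (sampled values of t).
    for k in range(1, samples + 1):
        t = eps * k / samples
        if not in_C([xi + t * di for xi, di in zip(x, d)]):
            return False
    return True


# Illustrative problem (an assumption): f(x) = x1^2 + x2^2 on the box [1, 2] x [1, 2].
def grad_f(x):
    return [2 * x[0], 2 * x[1]]


def in_box(x):
    return all(1.0 <= xi <= 2.0 for xi in x)


# Moving toward the origin from the corner (2, 2): descent, and stays in the box.
print(is_feasible_descent(grad_f, in_box, [2.0, 2.0], [-1.0, -1.0]))
# At the corner (1, 1): a descent direction that leaves the box.
print(is_feasible_descent(grad_f, in_box, [1.0, 1.0], [-1.0, 0.0]))
# At the corner (1, 1): a feasible direction that is not a descent direction.
print(is_feasible_descent(grad_f, in_box, [1.0, 1.0], [1.0, 0.0]))
```

In this toy problem the corner \((1,1)\) minimizes \(f\) over the box, which is consistent with the lemma: the directions tried there are either infeasible or not descent directions.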

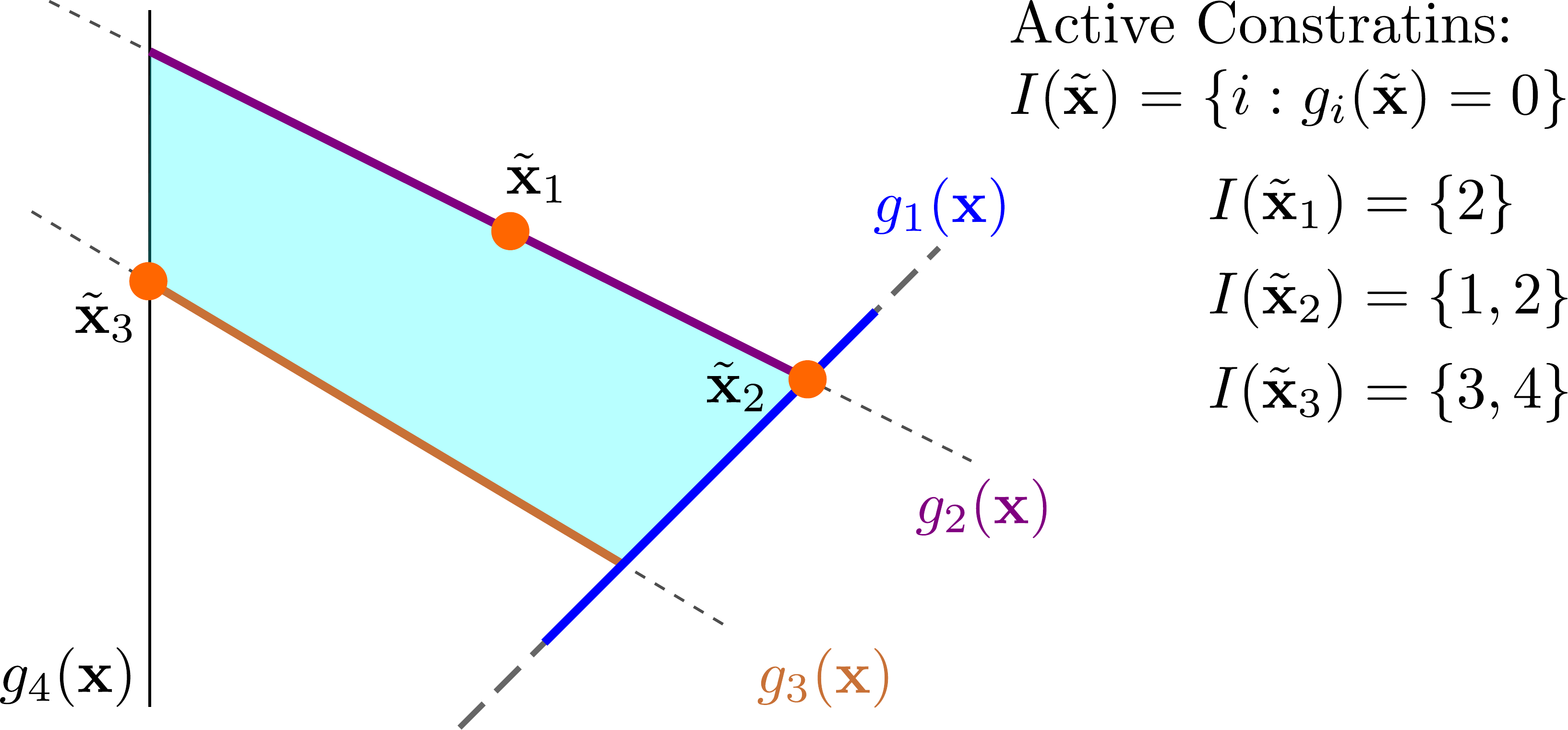

Recall: Active constraints

Definition (Recall: Active constraints)

Given a set of inequalities

\[g_i(\mathbf{x}) \leq 0, \quad i = 1,2,\ldots,m,\]

where \(g_i:\mathbb{R}^n \to \mathbb{R}\) are functions, and a vector \(\tilde{\mathbf{x}} \in \mathbb{R}^n\), the active constraints at \(\tilde{\mathbf{x}}\) are the constraints satisfied as equalities at \(\tilde{\mathbf{x}}\). The set of active constraints is denoted by

\[I(\tilde{\mathbf{x}}) = \{\, i : g_i(\tilde{\mathbf{x}}) = 0 \,\}.\]
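As a minimal sketch of this definition, the active set can be computed by evaluating each constraint and keeping the indices where the value is zero up to a tolerance. The function name `active_set`, the tolerance, and the three example constraints are assumptions made for illustration, and the code assumes the convention \(g_i(\mathbf{x}) \leq 0\).

```python
def active_set(constraints, x, tol=1e-9):
    """Return the 1-based indices i with g_i(x) = 0 (up to tol)."""
    return [i for i, g in enumerate(constraints, start=1) if abs(g(x)) <= tol]


# Illustrative constraints (assumptions), each written in the form g_i(x) <= 0.
constraints = [
    lambda x: x[0] ** 2 + x[1] ** 2 - 1,  # g_1: stay inside the unit disc
    lambda x: -x[0],                      # g_2: x1 >= 0
    lambda x: -x[1],                      # g_3: x2 >= 0
]

print(active_set(constraints, [1.0, 0.0]))  # on the circle and on the line x2 = 0
print(active_set(constraints, [0.5, 0.5]))  # strictly inside: nothing is active
```

A tolerance is used because floating-point evaluation rarely returns exactly zero on a curved boundary.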

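Regular Points

Consider the minimization problem

\[\begin{aligned} \min \quad & f(\mathbf{x}) \\ \text{s.t.} \quad & g_i(\mathbf{x}) \leq 0, \quad i = 1,2,\ldots,m, \\ & h_j(\mathbf{x}) = 0, \quad j = 1,2,\ldots,p, \end{aligned}\]

where \(f,g_1,\ldots,g_m,h_1,h_2,\ldots,h_p\) are continuously differentiable functions over \(\mathbb{R}^n\).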

Definition

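A feasible point \(\mathbf{x}^*\) is called regular if the gradients of the active constraints among the inequality constraints and of the equality constraints

\[\{\nabla g_i(\mathbf{x}^*) : g_i(\mathbf{x}^*) = 0\} \cup \{\nabla h_j(\mathbf{x}^*) : j = 1,2,\ldots,p\}\]

are linearly independent.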

Feasible points that are not regular are called irregular points.
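A short worked example may help make the definition concrete. The two inequality constraints below are hypothetical and chosen only for illustration; there are no equality constraints, so regularity depends only on the gradients of the active inequalities.

```latex
% Hypothetical constraints, chosen only for illustration.
\begin{align*}
g_1(\mathbf{x}) &= x_1^2 + x_2^2 - 1 \le 0, \qquad g_2(\mathbf{x}) = -x_2 \le 0, \\
\nabla g_1(\mathbf{x}) &= (2x_1,\, 2x_2)^{\top}, \qquad \nabla g_2(\mathbf{x}) = (0,\, -1)^{\top}.
\end{align*}
% At (1, 0) both constraints are active, with gradients (2, 0) and (0, -1).
\[
\det \begin{pmatrix} 2 & 0 \\ 0 & -1 \end{pmatrix} = -2 \neq 0
\quad \Longrightarrow \quad (1, 0)^{\top} \text{ is regular.}
\]
% At (0, 1) only g_1 is active, and its gradient (0, 2) is nonzero,
% so (0, 1) is regular as well.
```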

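Linear Independence

Definition

A collection of vectors

\[\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_k \in \mathbb{R}^n\]

is linearly independent if the only solution to the equation

\[\alpha_1 \mathbf{v}_1 + \alpha_2 \mathbf{v}_2 + \cdots + \alpha_k \mathbf{v}_k = \mathbf{0}\]

is \(\alpha_1 = \alpha_2 = \ldots = \alpha_k = 0\).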

Some methods for checking:

Check using the definition directly.

A single vector is linearly independent if and only if it is nonzero.

Two vectors are linearly independent if and only if neither is a scalar multiple of the other.

Put the \(k\) vectors in \(\mathbb{R}^n\) as columns in an \(n \times k\) matrix \(A\). Then:

If \(k\leq n\) and \(\mathrm{rank}(A) = k\), then the vectors are linearly independent.

If \(k=n\), then the vectors are linearly independent if \(\det(A) \neq 0\).

If \(k>n\), then the vectors are linearly dependent.
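The rank test above can be sketched in plain Python using Gaussian elimination. The function names `rank` and `linearly_independent` and the tolerance are assumptions made for this illustration; the code stores each vector as a row, which gives the same rank as the matrix with the vectors as columns.

```python
def rank(vectors, tol=1e-10):
    """Rank of the matrix built from the given vectors, via Gaussian elimination."""
    # Each vector is stored as a row; row rank equals column rank.
    rows = [[float(c) for c in v] for v in vectors]
    n = len(rows[0]) if rows else 0
    r = 0  # number of pivots found so far
    for col in range(n):
        if r == len(rows):
            break
        # Partial pivoting: pick the remaining row with the largest entry in this column.
        pivot = max(range(r, len(rows)), key=lambda i: abs(rows[i][col]))
        if abs(rows[pivot][col]) <= tol:
            continue  # no usable pivot in this column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        # Eliminate the entries below the pivot.
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def linearly_independent(vectors, tol=1e-10):
    """k vectors are linearly independent exactly when the rank equals k."""
    return rank(vectors, tol) == len(vectors)


print(linearly_independent([[1, 0, 0], [0, 1, 0]]))   # two independent vectors in R^3
print(linearly_independent([[1, 2], [2, 4]]))         # scalar multiples of each other
print(linearly_independent([[1, 0], [0, 1], [1, 1]])) # k = 3 > n = 2
```

In practice `numpy.linalg.matrix_rank` performs the same check with a more robust, SVD-based tolerance; the hand-written elimination is used here only to keep the sketch dependency-free.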

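KKT Points

Consider the minimization problem

\[\begin{aligned} \min \quad & f(\mathbf{x}) \\ \text{s.t.} \quad & g_i(\mathbf{x}) \leq 0, \quad i = 1,2,\ldots,m, \\ & h_j(\mathbf{x}) = 0, \quad j = 1,2,\ldots,p, \end{aligned}\]

where \(f,g_1,\ldots,g_m,h_1,h_2,\ldots,h_p\) are continuously differentiable functions over \(\mathbb{R}^n\).

Definition

A feasible point \(\mathbf{x}^*\) is called a KKT point if there exist \(\lambda_1,\lambda_2,\ldots,\lambda_m \geq 0\) and \(\mu_1,\mu_2,\ldots,\mu_p \in \mathbb{R}\) such that

\[\begin{aligned} \nabla f(\mathbf{x}^*) + \sum_{i=1}^m \lambda_i \nabla g_i(\mathbf{x}^*) + \sum_{j=1}^p \mu_j \nabla h_j(\mathbf{x}^*) &= \mathbf{0}, \\ \lambda_i g_i(\mathbf{x}^*) &= 0, \quad i = 1,2,\ldots,m. \end{aligned}\]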
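Given a candidate point and candidate multipliers, the KKT conditions can be verified mechanically. The sketch below assumes the standard form with inequality constraints \(g_i(\mathbf{x}) \leq 0\) and equality constraints \(h_j(\mathbf{x}) = 0\), and checks feasibility, \(\lambda_i \geq 0\), complementary slackness \(\lambda_i g_i(\mathbf{x}) = 0\), and stationarity of \(\nabla f + \sum_i \lambda_i \nabla g_i + \sum_j \mu_j \nabla h_j\). The function name `is_kkt_point`, the tolerance, and the example problem (minimize \(x_1 + x_2\) over the unit disc) are assumptions, not taken from the slides.

```python
import math


def is_kkt_point(grad_f, gs, grad_gs, hs, grad_hs, x, lam, mu, tol=1e-8):
    """Check the KKT conditions at x for multipliers lam (inequalities) and mu (equalities)."""
    # Feasibility: g_i(x) <= 0 and h_j(x) = 0.
    if any(g(x) > tol for g in gs) or any(abs(h(x)) > tol for h in hs):
        return False
    # Sign condition: lam_i >= 0 (mu_j may have any sign).
    if any(li < -tol for li in lam):
        return False
    # Complementary slackness: lam_i * g_i(x) = 0.
    if any(abs(li * g(x)) > tol for li, g in zip(lam, gs)):
        return False
    # Stationarity: grad f + sum_i lam_i grad g_i + sum_j mu_j grad h_j = 0.
    residual = list(grad_f(x))
    for li, grad_g in zip(lam, grad_gs):
        residual = [r + li * c for r, c in zip(residual, grad_g(x))]
    for mj, grad_h in zip(mu, grad_hs):
        residual = [r + mj * c for r, c in zip(residual, grad_h(x))]
    return all(abs(r) <= tol for r in residual)


# Illustrative problem (an assumption): min x1 + x2  s.t.  x1^2 + x2^2 - 1 <= 0.
grad_f = lambda x: [1.0, 1.0]
gs = [lambda x: x[0] ** 2 + x[1] ** 2 - 1]
grad_gs = [lambda x: [2 * x[0], 2 * x[1]]]

s = 1 / math.sqrt(2)
# The minimizer (-s, -s) with multiplier lam = s satisfies all the conditions.
print(is_kkt_point(grad_f, gs, grad_gs, [], [], [-s, -s], [s], []))
# At (s, s) stationarity would need lam = -s, which violates lam >= 0.
print(is_kkt_point(grad_f, gs, grad_gs, [], [], [s, s], [-s], []))
```

This only verifies multipliers that are supplied; finding them in general means solving the stationarity system for \(\lambda\) and \(\mu\).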

KKT conditions for Inequality and equality constrained problems

Theorem (Inequality and equality constrained problems)

Let \(\mathbf{x}^*\) be a local minimum of the problem

\[\begin{aligned} \min \quad & f(\mathbf{x}) \\ \text{s.t.} \quad & g_i(\mathbf{x}) \leq 0, \quad i = 1,2,\ldots,m, \\ & h_j(\mathbf{x}) = 0, \quad j = 1,2,\ldots,p, \end{aligned}\]

where \(f,g_1,\ldots,g_m,h_1,h_2,\ldots,h_p\) are continuously differentiable functions over \(\mathbb{R}^n\). If \(\mathbf{x}^*\) is regular, then \(\mathbf{x}^*\) is a KKT point.

A necessary condition for local optimality of a regular point is that it is a KKT point.

Regularity is not required in the linearly constrained case.
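To see why the regularity assumption matters when the constraints are nonlinear, consider the following hypothetical problem, constructed only for illustration. Its feasible set is the intersection of two closed unit discs that touch at the origin, so the origin is the only feasible point and hence the minimizer, yet it is not a KKT point.

```latex
% Hypothetical problem, chosen only for illustration.
\begin{align*}
\min \quad & x_1 + x_2 \\
\text{s.t.} \quad & g_1(\mathbf{x}) = (x_1 - 1)^2 + x_2^2 - 1 \le 0, \\
& g_2(\mathbf{x}) = (x_1 + 1)^2 + x_2^2 - 1 \le 0.
\end{align*}
% The only feasible point is x* = (0, 0); both constraints are active there.
\[
\nabla g_1(\mathbf{x}^*) = (-2,\, 0)^{\top}, \qquad
\nabla g_2(\mathbf{x}^*) = (2,\, 0)^{\top}
\quad \text{(linearly dependent, so } \mathbf{x}^* \text{ is irregular).}
\]
% Stationarity would require
\[
\begin{pmatrix} 1 \\ 1 \end{pmatrix}
+ \lambda_1 \begin{pmatrix} -2 \\ 0 \end{pmatrix}
+ \lambda_2 \begin{pmatrix} 2 \\ 0 \end{pmatrix}
= \begin{pmatrix} 0 \\ 0 \end{pmatrix},
\]
% which is impossible because the second component equals 1 for every choice
% of multipliers. The theorem is not contradicted, since x* is not regular.
```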