Lecture 7-1: Convex Functions: Part 1#

Download the original slides: CMSE382-Lec7_1.pdf

Warning

This is an AI-generated transcript of the lecture slides and may contain errors or inaccuracies. Please refer to the original course materials for authoritative content.

Definition of Convex Function#

Topics Covered#

Topics:

Definition (vid)

First order characterization (vid)

Second order characterization (vid)

Operations preserving convexity

Scaling, Summation, and Affine transformation (vid)

Composition (vid)

Point-wise maximum (vid)

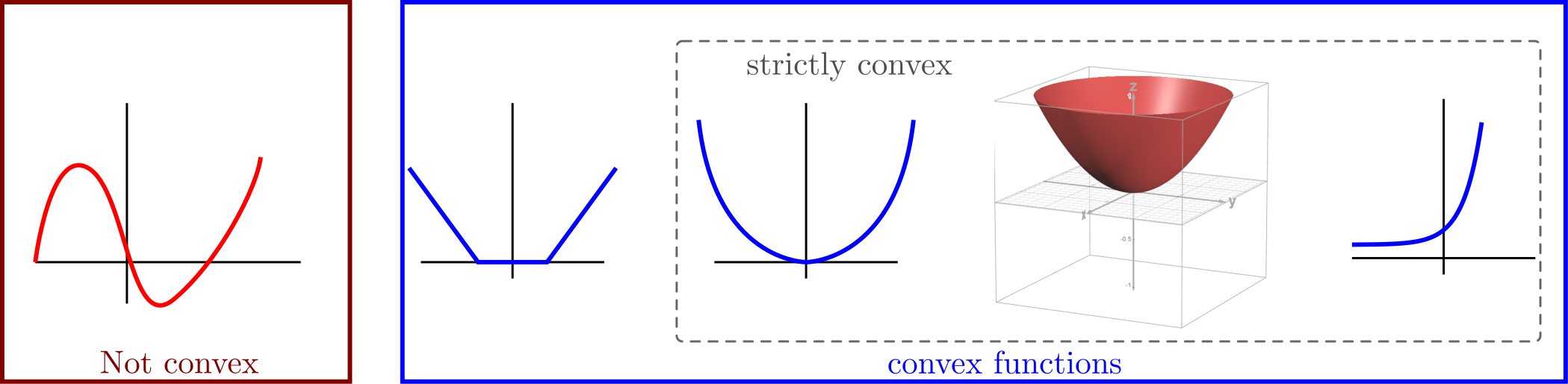

Definition of Convex Functions#

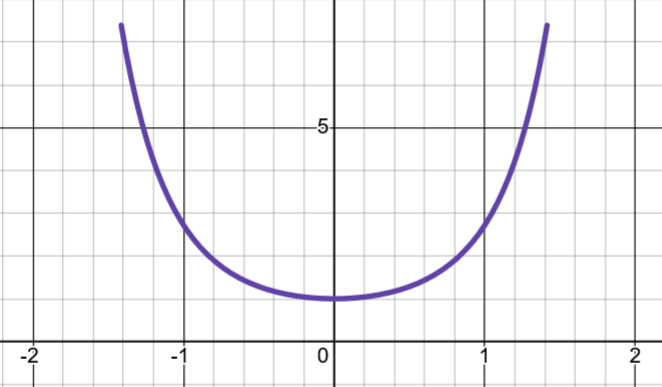

Definition: A function \(f: C \to \mathbb{R}\) defined on a convex set \(C \subset \mathbb{R}^n\) is

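convex if and only if \(f(\lambda \mathbf{x} + (1 - \lambda)\mathbf{y}) \le \lambda f(\mathbf{x}) + (1 - \lambda)f(\mathbf{y})\) for all \(\mathbf{x}, \mathbf{y} \in C\), \(\lambda \in [0, 1]\).

strictly convex if and only if \(f(\lambda \mathbf{x} + (1 - \lambda)\mathbf{y}) < \lambda f(\mathbf{x}) + (1 - \lambda)f(\mathbf{y})\) for all \(\mathbf{x} \neq \mathbf{y} \in C\), \(\lambda \in (0, 1)\).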

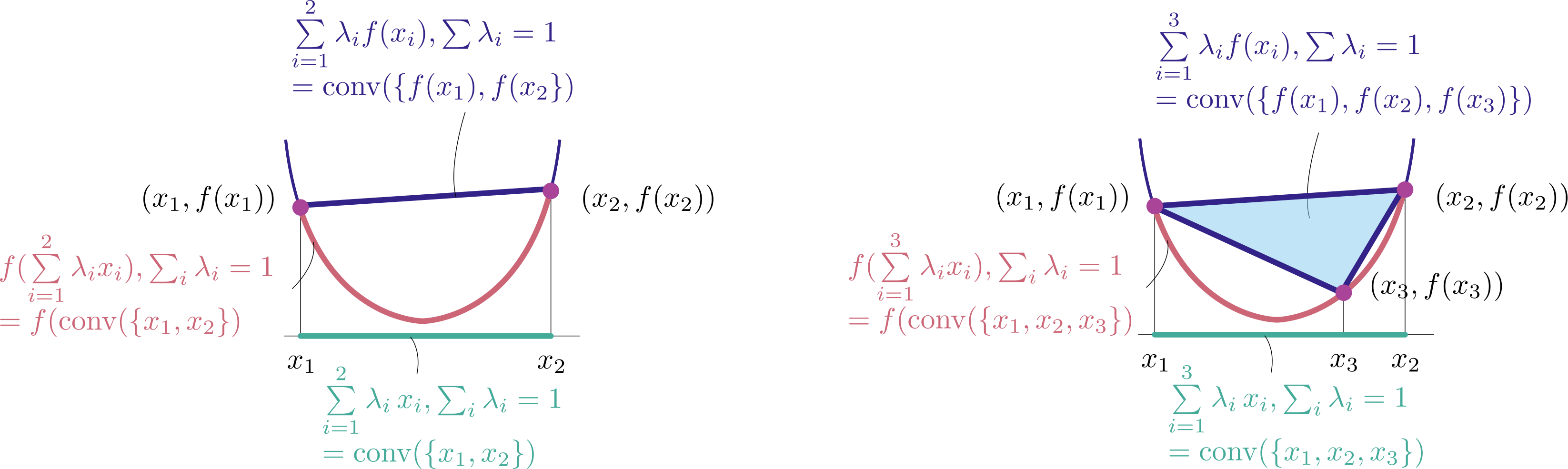

Jensen Inequality#

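Theorem: Let \(f: C \to \mathbb{R}\) be a convex function defined on a convex set \(C \subseteq \mathbb{R}^n\). Then we have

\(f\left(\sum_{i=1}^m \lambda_i \mathbf{x}_i\right) \le \sum_{i=1}^m \lambda_i f(\mathbf{x}_i)\)

if \(\lambda \in \Delta_m\) and \(\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_m \in C\).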

\(\lambda = (\lambda_1, \ldots, \lambda_m) \in \Delta_m\) means \(\lambda_i \geq 0\) for all \(i\) and \(\sum_{i=1}^m \lambda_i = 1\).
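Jensen's inequality can be checked numerically on a small example. The sketch below assumes NumPy is available and uses the squared Euclidean norm as the convex function; that choice, the random points, and the helper name `jensen_gap` are illustrative assumptions and do not come from the slides.

```python
import numpy as np

rng = np.random.default_rng(0)

def f(x):
    # Assumed convex test function: squared Euclidean norm.
    return float(np.dot(x, x))

def jensen_gap(points, weights):
    # sum_i lambda_i f(x_i) - f(sum_i lambda_i x_i); nonnegative when f is convex.
    combo = weights @ points  # convex combination of the rows of `points`
    weighted_values = weights @ np.array([f(p) for p in points])
    return float(weighted_values - f(combo))

m, n = 5, 3
points = rng.normal(size=(m, n))   # x_1, ..., x_m as rows
weights = rng.random(m)
weights /= weights.sum()           # normalize so the weights lie in Delta_m

gap = jensen_gap(points, weights)
print("Jensen gap is nonnegative:", gap >= 0)
```

A nonnegative gap on one random draw does not prove convexity; it only fails to contradict it.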

Example: Affine Functions Are Convex#

Example: Is the function (called affine function) \(f(\mathbf{x})=\mathbf{a}^{\top} \mathbf{x} + b\), where \(\mathbf{a} \in \mathbb{R}^n\) and \(b\in \mathbb{R}\), convex?

Answer:

Recall: \(f\) is convex if and only if \(f(\lambda \mathbf{x} + (1 - \lambda)\mathbf{y}) \le \lambda f(\mathbf{x}) + (1 - \lambda)f(\mathbf{y})\) for all \(\mathbf{x}, \mathbf{y} \in C\), \(\lambda \in [0, 1]\).

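For any \(\mathbf{x}, \mathbf{y} \in \mathbb{R}^n\) and \(\lambda \in [0, 1]\), \(f(\lambda \mathbf{x} + (1 - \lambda)\mathbf{y}) = \mathbf{a}^{\top}(\lambda \mathbf{x} + (1 - \lambda)\mathbf{y}) + b = \lambda(\mathbf{a}^{\top}\mathbf{x} + b) + (1 - \lambda)(\mathbf{a}^{\top}\mathbf{y} + b) = \lambda f(\mathbf{x}) + (1 - \lambda)f(\mathbf{y})\), so the inequality holds with equality.

So an affine function is convex.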

First Order Characterization#

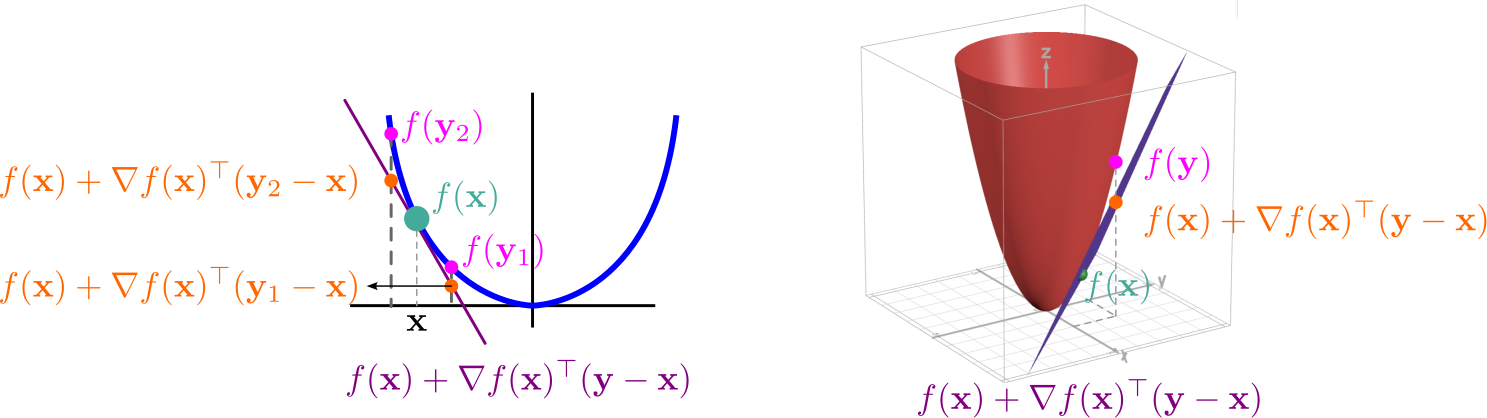

The Gradient Inequality#

Theorem: If \(f:C \to \mathbb{R}\) is continuously differentiable then:

\(f\) is convex if and only if \(f(\mathbf{y}) \geq f(\mathbf{x}) + \nabla f(\mathbf{x})^{\top} (\mathbf{y}-\mathbf{x})\), \(\forall \mathbf{x},\mathbf{y} \in C\).

\(f\) is strictly convex if and only if \(f(\mathbf{y}) > f(\mathbf{x}) + \nabla f(\mathbf{x})^{\top} (\mathbf{y}-\mathbf{x})\), \(\forall \mathbf{x}\neq \mathbf{y} \in C\).

The tangent hyperplanes of convex functions are always underestimates of the function.
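The underestimate property can be illustrated with a short numerical sketch. It assumes NumPy and uses \(f(\mathbf{x}) = \sum_i \exp(x_i)\), a convex function chosen here for illustration (not taken from the slides) because its gradient is easy to write down by hand.

```python
import numpy as np

def f(x):
    # Assumed convex test function: sum of exponentials.
    return float(np.sum(np.exp(x)))

def grad_f(x):
    # Gradient of f, derived by hand: each component is exp(x_i).
    return np.exp(x)

def tangent_gap(x, y):
    # f(y) minus the tangent-plane value at y built from the point x.
    # The gradient inequality says this is nonnegative for convex f.
    return f(y) - (f(x) + float(grad_f(x) @ (y - x)))

rng = np.random.default_rng(1)
gaps = [tangent_gap(rng.normal(size=3), rng.normal(size=3)) for _ in range(1000)]
min_gap = min(gaps)
print("smallest gap over random pairs is nonnegative:", min_gap >= 0)
```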

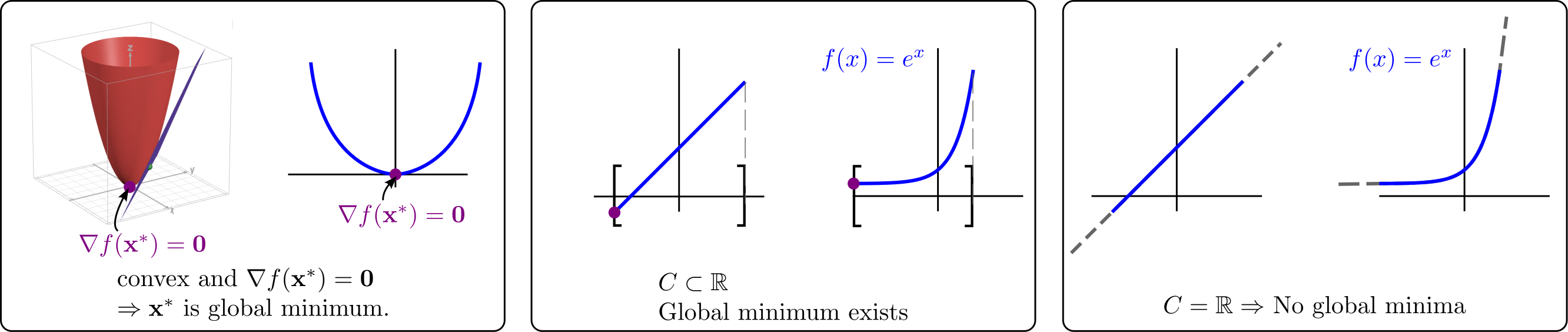

Stationarity Under Convexity#

Theorem: Let \(f: C \to \mathbb{R}\) be a continuously differentiable convex function over the convex set \(C \subseteq \mathbb{R}^n\).

If \(\nabla f(\mathbf{x}^*) = 0\) for some \(\mathbf{x}^* \in C\), then \(\mathbf{x}^*\) is a global minimizer of \(f\) over \(C\).

If \(C= \mathbb{R}^n\), then \(\nabla f(\mathbf{x}^*) = 0\) if and only if \(\mathbf{x}^*\) is a global minimizer of \(f\) over \(\mathbb{R}^n\).
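As a small illustration of the stationarity result, the sketch below takes the convex function \(f(x_1, x_2) = (x_1 - 1)^2 + (x_2 + 2)^2\), an example assumed here rather than taken from the slides. It confirms that the gradient vanishes at \((1, -2)\) and that no randomly sampled point achieves a smaller value. NumPy is assumed to be available.

```python
import numpy as np

def f(x):
    # Assumed convex example: a shifted sum of squares.
    return float((x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2)

def grad_f(x):
    # Hand-derived gradient of f.
    return np.array([2.0 * (x[0] - 1.0), 2.0 * (x[1] + 2.0)])

x_star = np.array([1.0, -2.0])  # candidate stationary point

rng = np.random.default_rng(2)
samples = rng.uniform(-10.0, 10.0, size=(1000, 2))

# For a convex f, a zero gradient means no sample can beat x_star.
no_better_point = all(f(x) >= f(x_star) for x in samples)
print("gradient at x_star:", grad_f(x_star))
print("no sampled point is better:", no_better_point)
```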

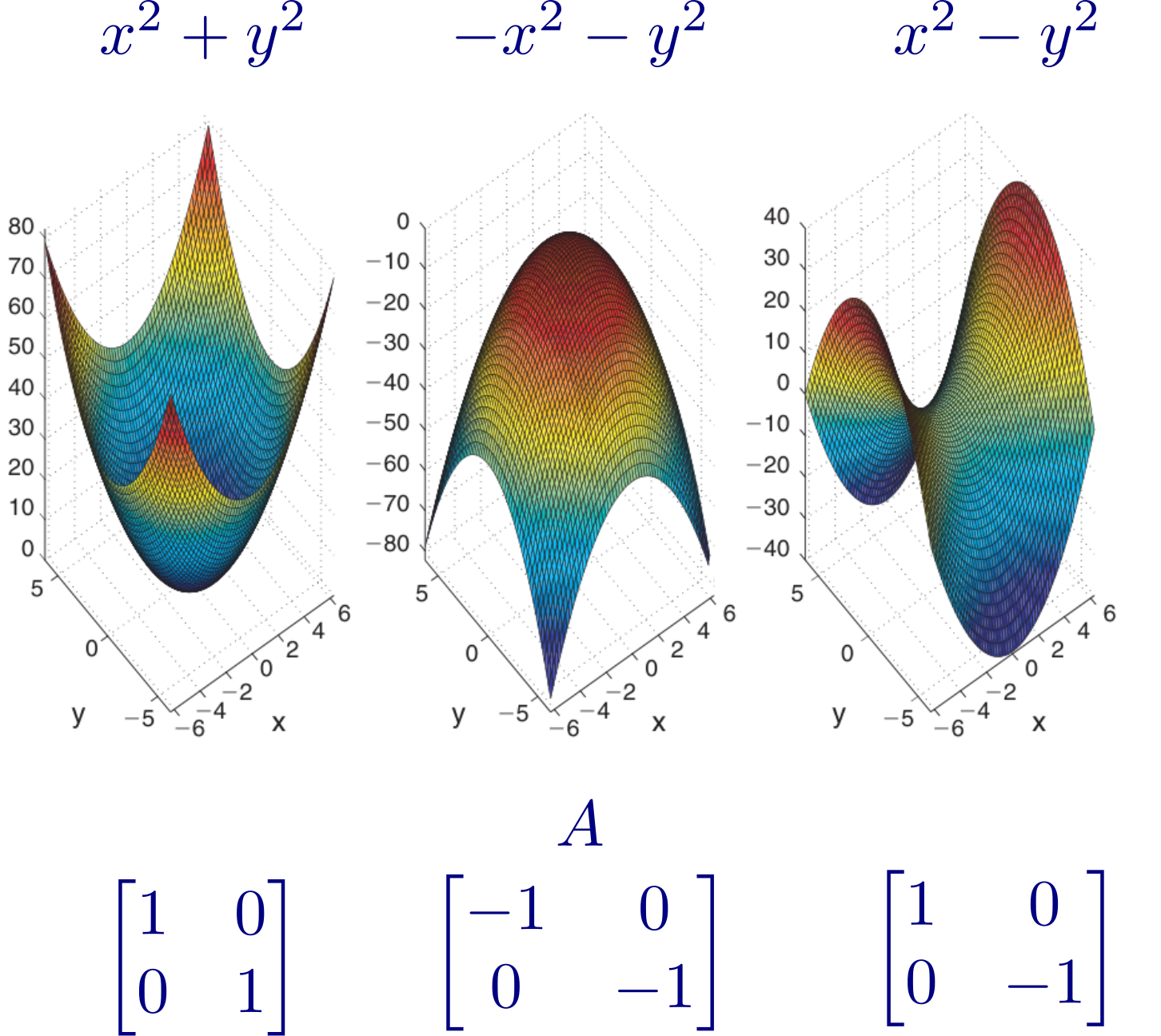

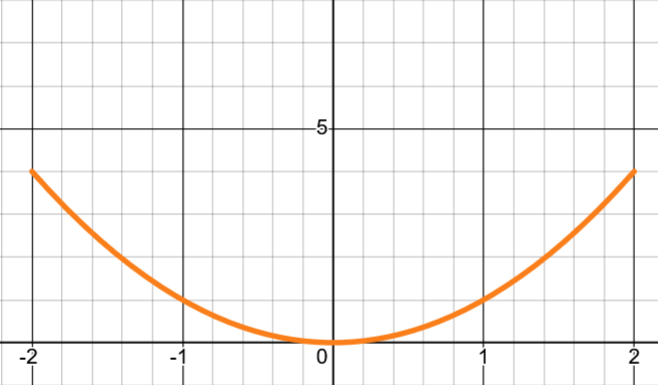

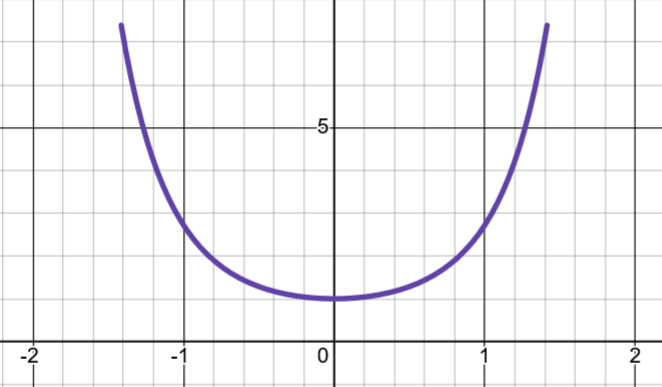

Convexity of Quadratic Functions#

Theorem: Let \(f : \mathbb{R}^n \to \mathbb{R}\) be the quadratic function given by \(f(\mathbf{x}) = \mathbf{x}^{\top} A\mathbf{x} + 2\mathbf{b}^{\top} \mathbf{x} + c\), where \(A \in \mathbb{R}^{n \times n}\) is symmetric, \(\mathbf{b} \in \mathbb{R}^n\), and \(c \in \mathbb{R}\). Then:

\(f\) is convex if and only if \(A \succeq 0\).

\(f\) is strictly convex if and only if \(A \succ 0\).
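Since a symmetric matrix is positive semidefinite exactly when all of its eigenvalues are nonnegative, the theorem suggests a simple eigenvalue test. The following is a minimal sketch assuming NumPy; the function name `classify_quadratic` and the tolerance `tol` are hypothetical choices made for this illustration.

```python
import numpy as np

def classify_quadratic(A, tol=1e-10):
    # Classify f(x) = x^T A x + 2 b^T x + c from the symmetric matrix A alone;
    # b and c do not affect convexity.
    A = np.asarray(A, dtype=float)
    eigvals = np.linalg.eigvalsh(A)  # eigenvalues of a symmetric matrix, ascending
    if eigvals[0] > tol:
        return "strictly convex"     # A is positive definite
    if eigvals[0] >= -tol:
        return "convex"              # A is positive semidefinite (up to tol)
    return "not convex"              # A has a negative eigenvalue

print(classify_quadratic([[2.0, 0.0], [0.0, 1.0]]))
print(classify_quadratic([[1.0, 1.0], [1.0, 1.0]]))
print(classify_quadratic([[1.0, 2.0], [2.0, 1.0]]))
```

The tolerance is there because computed eigenvalues of a singular matrix may come out as tiny nonzero numbers.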

Monotonicity of the Gradient#

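Theorem: Let \(f : C \to \mathbb{R}\) be continuously differentiable over the convex set \(C \subseteq \mathbb{R}^n\). Then \(f\) is convex over \(C\) if and only if

\((\nabla f(\mathbf{x}) - \nabla f(\mathbf{y}))^{\top} (\mathbf{x} - \mathbf{y}) \geq 0\) for all \(\mathbf{x}, \mathbf{y} \in C\).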

Second Order Characterization#

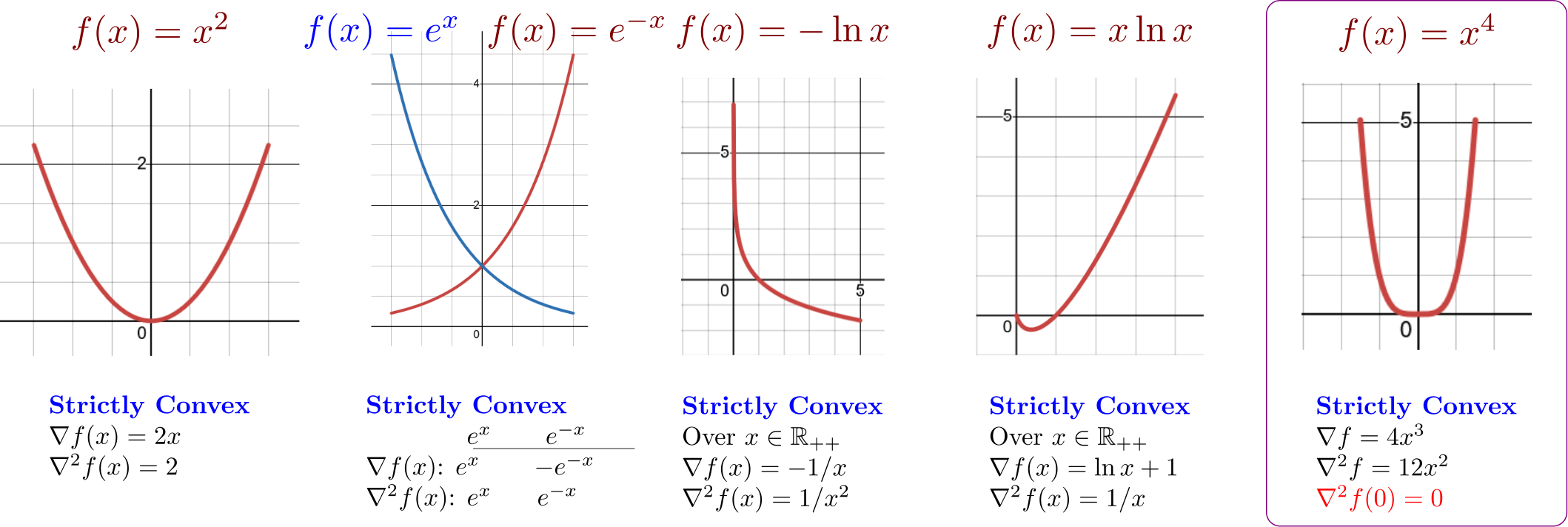

Theorem: Let \(f\) be a twice continuously differentiable function over an open convex set \(C \subseteq \mathbb{R}^n\).

\(f\) is convex over \(C\) if and only if \(\nabla^2 f(\mathbf{x}) \succeq 0\) for any \(\mathbf{x} \in C\).

If \(\nabla^2 f(\mathbf{x}) \succ 0\) for any \(\mathbf{x} \in C\), then \(f\) is strictly convex over \(C\).
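The second statement is only a sufficient condition. A sketch of why, assuming NumPy and using the example \(f(x_1, x_2) = x_1^4 + x_2^2\) (chosen here for illustration, not from the slides): this function is strictly convex, yet its Hessian is only positive semidefinite, not positive definite, wherever \(x_1 = 0\).

```python
import numpy as np

def hessian(x):
    # Hand-derived Hessian of the assumed example f(x1, x2) = x1**4 + x2**2.
    return np.array([[12.0 * x[0] ** 2, 0.0],
                     [0.0, 2.0]])

def min_eigenvalue(x):
    # Smallest eigenvalue of the symmetric Hessian at x.
    return float(np.linalg.eigvalsh(hessian(x))[0])

# Evaluate the Hessian on a small grid that includes points with x1 = 0.
grid = [np.array([u, v])
        for u in np.linspace(-2.0, 2.0, 9)
        for v in np.linspace(-2.0, 2.0, 9)]

psd_on_grid = all(min_eigenvalue(p) >= -1e-12 for p in grid)
pd_where_x1_is_zero = min_eigenvalue(np.array([0.0, 1.0])) > 1e-12
print("Hessian PSD on the grid:", psd_on_grid)
print("Hessian PD at (0, 1):", pd_where_x1_is_zero)
```

A grid check like this can only refute positive semidefiniteness, not establish it everywhere; here the closed-form Hessian makes the conclusion clear by inspection.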

Operations Preserving Convexity#

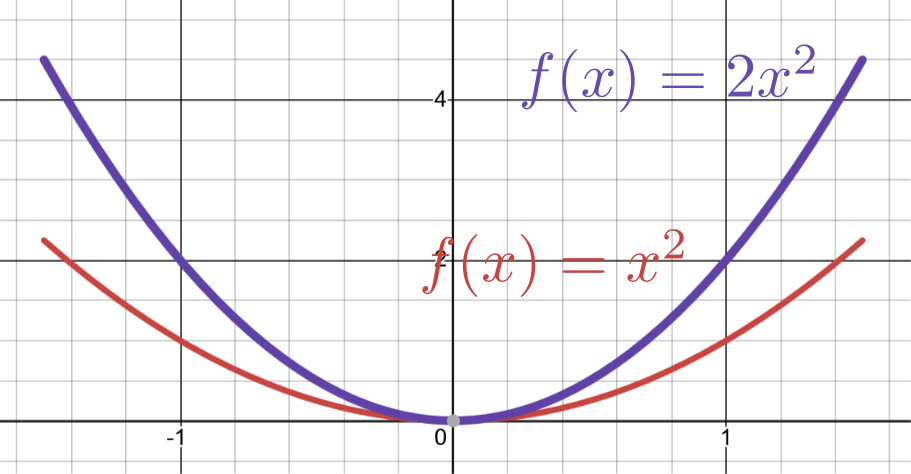

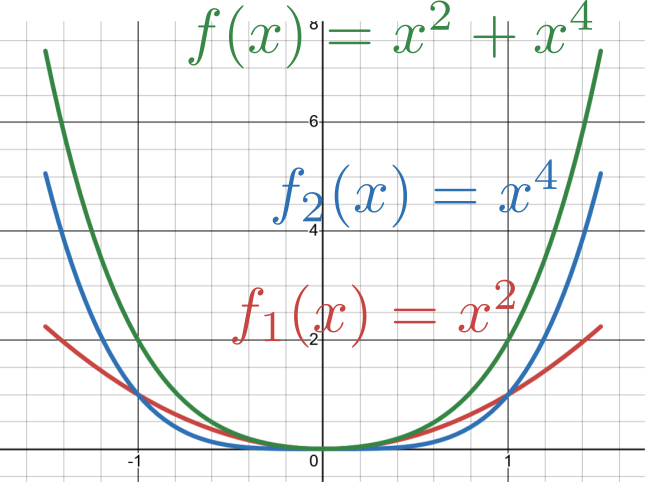

Convexity Under Summation and Non-Negative Scaling#

Theorem:

Let \(f\) be a convex function defined over a convex set \(C \subseteq \mathbb{R}^n\) and let \(\alpha \geq 0\). Then \(\alpha f\) is a convex function over \(C\).

Let \(f_1, f_2, \ldots, f_p\) be convex functions over a convex set \(C \subseteq \mathbb{R}^n\). Then the sum function \(f_1 + f_2 + \cdots + f_p\) is convex over \(C\).

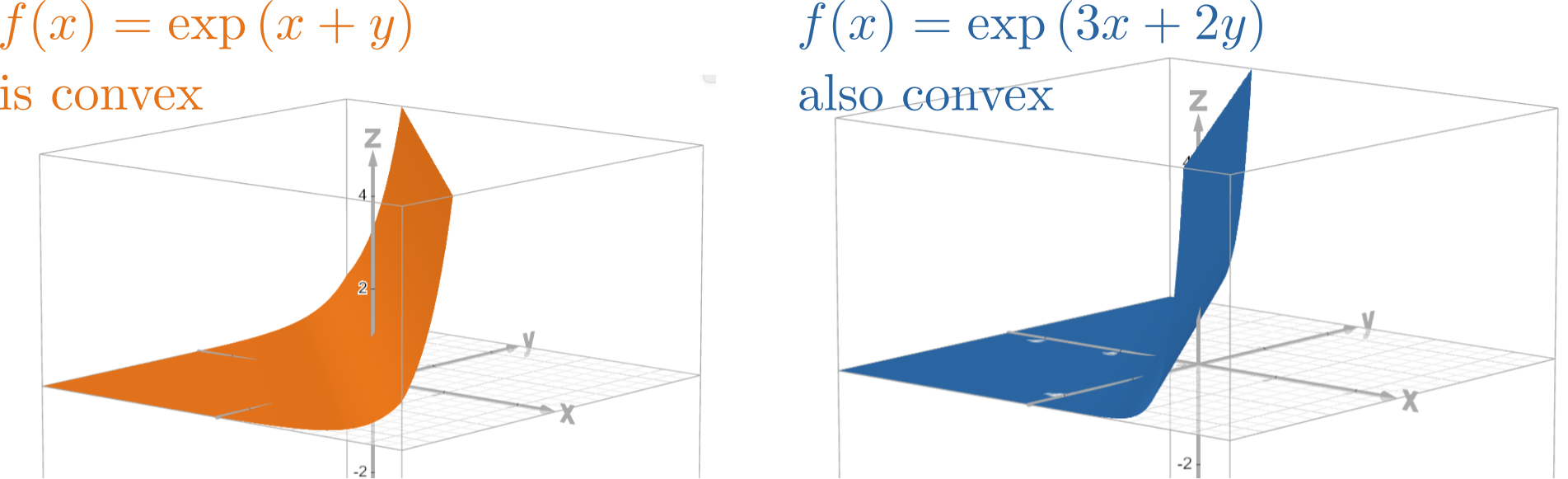

Convexity Under Affine Change of Variables#

Theorem: Let \(f: C \to \mathbb{R}\) be a convex function defined on a convex set \(C \subseteq \mathbb{R}^n\). Let \(A \in \mathbb{R}^{n \times m}\) and \(\mathbf{b} \in \mathbb{R}^n\). Then the function \(g\) defined by

\(g(\mathbf{y}) = f(A\mathbf{y} + \mathbf{b})\)

is convex over the convex set \(D = \{\mathbf{y} \in \mathbb{R}^m : A\mathbf{y} + \mathbf{b}\in C\}\).

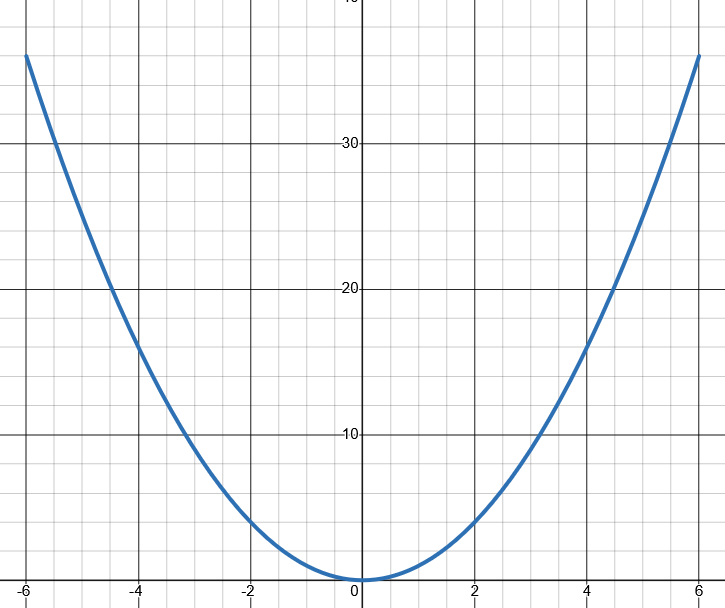

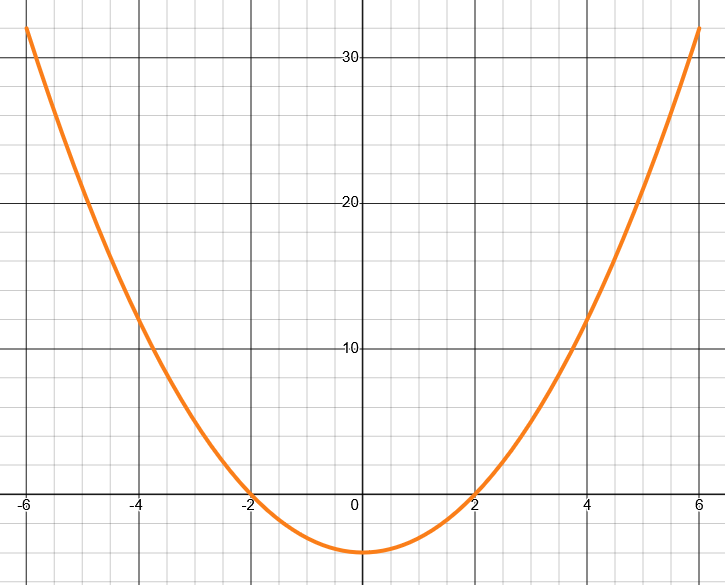

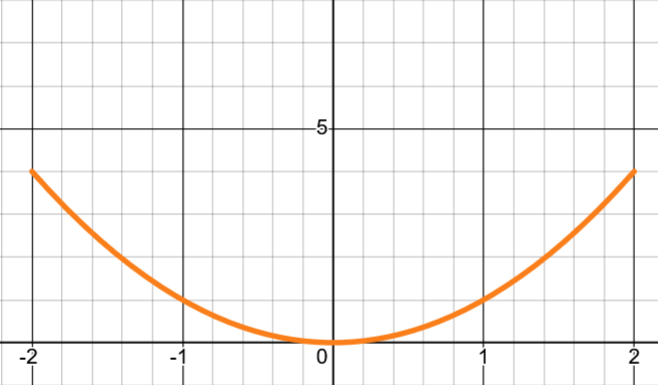

Convexity Under Composition#

Is convexity preserved under composition? Not always!

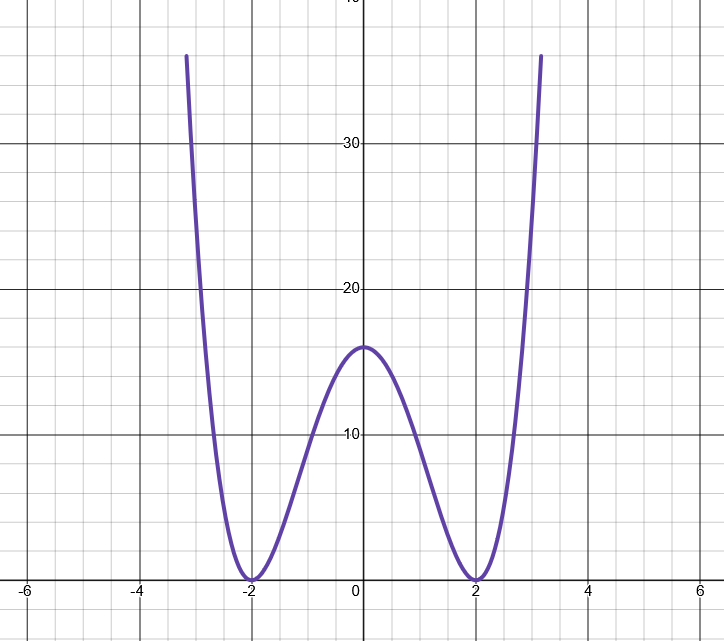

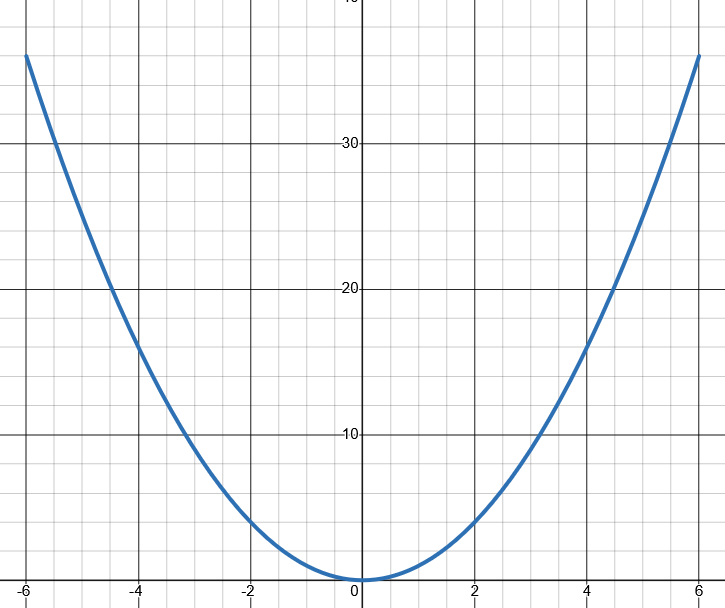

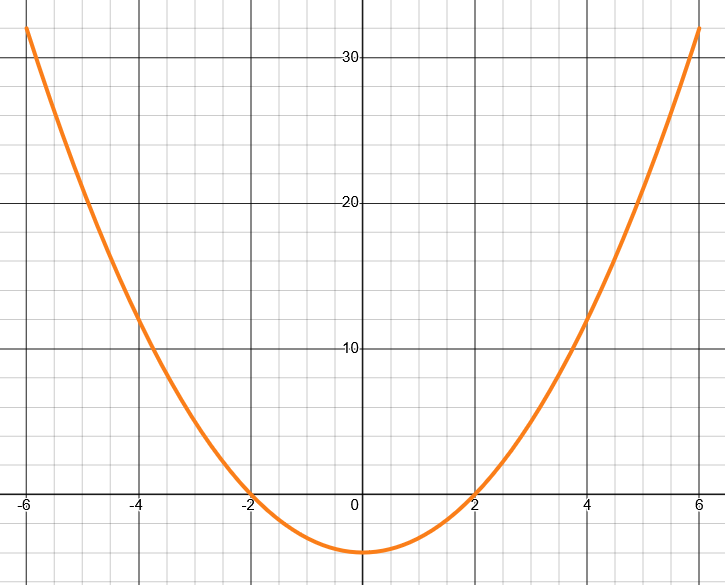

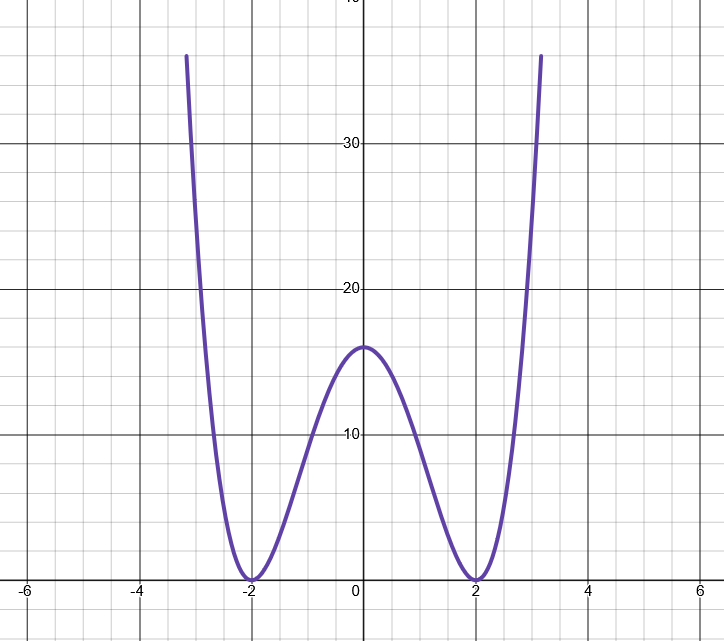

Example 1: \(g(x)=x^2\) (convex), \(h(x)=x^2-4\) (convex), but \(g(h(x))=(x^2-4)^2\) is NOT convex.

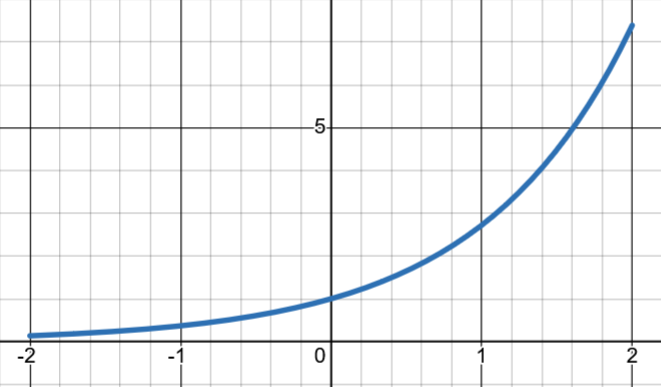

Example 2: \(g(x)=\exp(x)\) (convex), \(h(x)=x^2\) (convex), and \(g(h(x))=\exp(x^2)\) is convex.

Monotonic Functions#

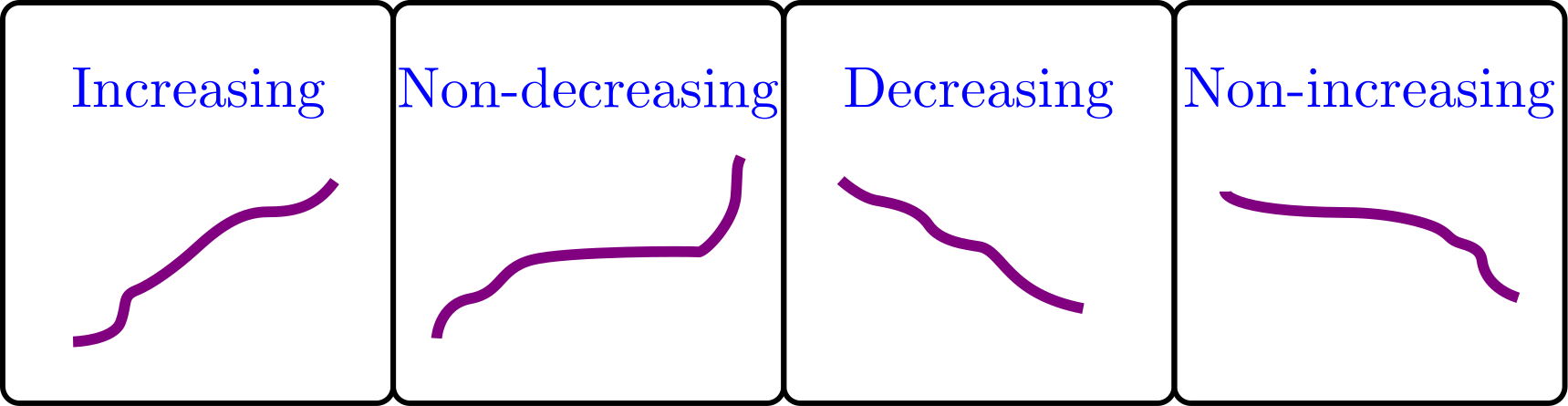

Definition: A function \(f:I\to \mathbb{R}\) where \(I\subseteq \mathbb{R}\) is called:

Increasing if \(f(x) < f(y)\) for all \(x,y \in I\) with \(x < y\).

Non-decreasing if \(f(x) \leq f(y)\) for all \(x,y \in I\) with \(x < y\).

Decreasing if \(f(x) > f(y)\) for all \(x,y \in I\) with \(x < y\).

Non-increasing if \(f(x) \geq f(y)\) for all \(x,y \in I\) with \(x < y\).

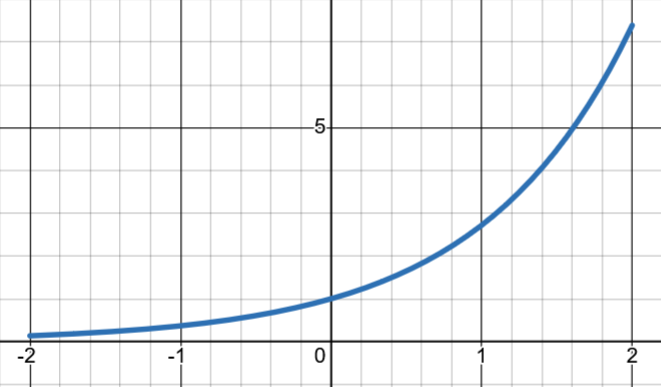

Convexity Under Composition With a Non-Decreasing Convex Function#

Theorem: Let \(f: C \to \mathbb{R}\) be a convex function over the convex set \(C \subseteq \mathbb{R}^n\). Let \(g: I \to \mathbb{R}\) be a one-dimensional nondecreasing convex function over the interval \(I \subseteq \mathbb{R}\). Assume that \(f(C) \subseteq I\). Then \(g(f(\mathbf{x}))\), \(\mathbf{x} \in C\), is a convex function over \(C\).

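Example (convex): \(g(x)=\exp(x)\), \(h(x)=x^2\), \(g(h(x))=\exp(x^2)\). Here \(g\) is convex and nondecreasing on \(\mathbb{R}\), so the theorem applies.

Example (NOT convex): \(g(x)=x^2\), \(h(x)=x^2-4\), \(g(h(x))=(x^2-4)^2\). Here \(g\) is convex but not nondecreasing on the range of \(h\), which is \([-4, \infty)\), so the theorem does not apply.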
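The two composition examples can be compared with a midpoint test: for a convex function, the average of the values at two points is never below the value at their midpoint. The sketch below uses only the Python standard library; the helper name `midpoint_gap` and the sample points are assumptions made for this illustration.

```python
import math

def composed_bad(x):
    # g(h(x)) with g(t) = t**2 and h(x) = x**2 - 4.
    return (x ** 2 - 4.0) ** 2

def composed_good(x):
    # g(h(x)) with g(t) = exp(t) and h(x) = x**2.
    return math.exp(x ** 2)

def midpoint_gap(f, x, y):
    # Average of the endpoint values minus the midpoint value.
    # A negative result certifies that f is not convex.
    return 0.5 * (f(x) + f(y)) - f(0.5 * (x + y))

# Both endpoints are zeros of (x^2 - 4)^2, but the midpoint x = 0 is not.
bad_gap = midpoint_gap(composed_bad, -2.0, 2.0)

# For exp(x^2) the gap stays nonnegative on a small grid of pairs.
xs = [-2.0 + 0.5 * k for k in range(9)]
good_gaps = [midpoint_gap(composed_good, x, y) for x in xs for y in xs]

print("gap for (x^2 - 4)^2 at (-2, 2):", bad_gap)
print("all gaps nonnegative for exp(x^2):", min(good_gaps) >= 0)
```

A single negative gap is enough to rule out convexity, whereas nonnegative gaps on a grid are only consistent with it.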

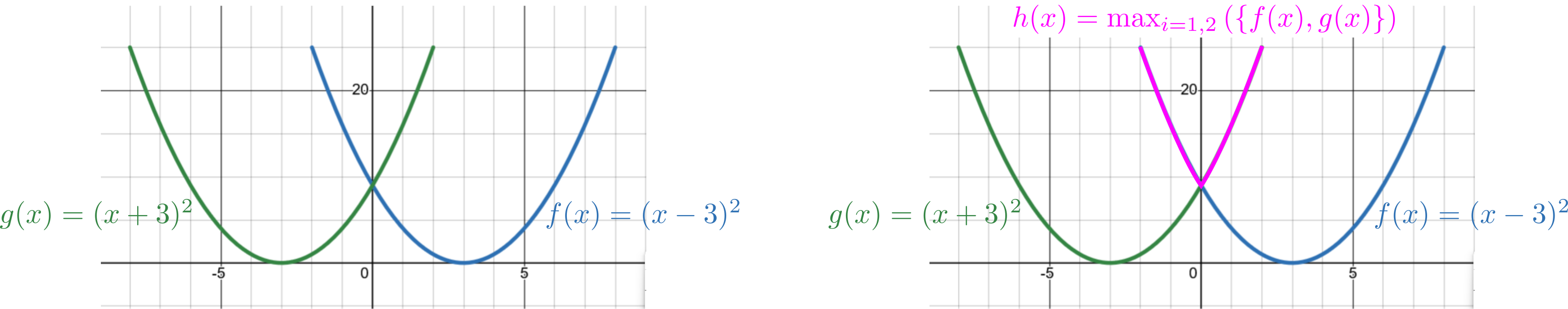

Pointwise Maximum Preserves Convexity#

Theorem: Let \(f_{1},\ldots,f_{p}:C\rightarrow \mathbb{R}\) be \(p\) convex functions over the convex set \(C\subseteq \mathbb{R}^{n}\). Then the maximum function \(f(\mathbf{x})\equiv \max_{i=1,\dots,p}f_{i}(\mathbf{x})\) is a convex function over \(C\).
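A common instance of this theorem is the maximum of finitely many affine functions, each of which is convex. The sketch below, assuming NumPy, builds such a function from randomly generated coefficients (the data and the names `f_max` and `convexity_gap` are illustrative assumptions) and checks the defining convexity inequality on random pairs of points.

```python
import numpy as np

rng = np.random.default_rng(3)
A = rng.normal(size=(4, 2))  # row i holds the coefficients a_i of f_i(x) = a_i^T x + b_i
b = rng.normal(size=4)

def f_max(x):
    # Pointwise maximum of the p = 4 affine functions.
    return float(np.max(A @ x + b))

def convexity_gap(x, y, lam):
    # lam*f(x) + (1-lam)*f(y) - f(lam*x + (1-lam)*y); nonnegative for convex f.
    return lam * f_max(x) + (1.0 - lam) * f_max(y) - f_max(lam * x + (1.0 - lam) * y)

gaps = []
for _ in range(1000):
    x, y = rng.normal(size=2), rng.normal(size=2)
    lam = rng.random()  # lam in [0, 1)
    gaps.append(convexity_gap(x, y, lam))

# Allow a tiny tolerance: the gap is exactly zero in theory when the same
# affine piece is active at both points, so rounding can push it slightly below 0.
print("convexity inequality holds on all samples:", min(gaps) >= -1e-9)
```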