In-Class Assignment: Machine Learning; classification with logistic regression#

Day 15#

CMSE 202#

Agenda for today’s class#

✅ Put your name here

#✅ Put your group member names here

#Imports for the day#

import pandas as pd

import matplotlib.pyplot as plt

import numpy as np

from sklearn.model_selection import train_test_split

import statsmodels.api as sm

from sklearn import metrics

1. Training vs Testing#

As you learned in the pre-class, classification is an ML process that maps features of an input data set to class labels. Classification is a supervised learning approach in which example data is used to train the model. We typically divide the data used to train and evaluate the classifier (the resulting model) into three sets:

training set

testing set

validation set

✅ Do This: As a group, discuss what these three sets represent. It might help to review these terms on the web. Put your answers down below:

✎ Training set is:

✎ Testing set is:

✎ Validation set is:
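To make the discussion concrete, here is a minimal sketch of one common way to produce three sets: call `train_test_split` twice. The toy arrays, the 60/20/20 proportions, and the variable names are assumptions chosen for illustration; they are not part of this assignment's data.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Toy data: 100 samples, 3 features, binary labels (all made up for illustration)
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
y = rng.integers(0, 2, size=100)

# First hold out 20% of the samples as the final testing set
X_rest, X_test, y_rest, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Then carve a validation set out of what remains (25% of 80% = 20% overall)
X_train, X_val, y_train, y_val = train_test_split(
    X_rest, y_rest, test_size=0.25, random_state=0
)

print(len(X_train), len(X_val), len(X_test))  # 60 20 20
```

The second call only ever sees `X_rest`, so the testing samples stay untouched until the very end.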

Defining the features and building the model#

If you review the image at the top of the notebook, you might notice that one of the first steps in machine learning is to go from “raw data” into a set of “features” and “labels”. Extracting features from our data can sometimes be one of the trickier parts of the process and also one of the most important ones. We have to think carefully about exactly what the “right” features are for training our machine learning algorithm and, when possible, it is advantageous to find ways to reduce the total number of features we are trying to model. Once we define our features, we can build our model.

1.1 Working with data#

There is a common data set used to work with classification called the breast cancer data set. It is actually available in `sklearn` but rather than working with the dataset that's been cleaned up for us, let's continue to flex your data wrangling skills and look at the original data. You'll need to download two data files:

breast-cancer-wisconsin.data

breast-cancer-wisconsin.names

The data are in “.data” and the “.names” describes that data.

You can download the files from here:

https://raw.githubusercontent.com/msu-cmse-courses/cmse202-supplemental-data/main/data/breast-cancer-wisconsin.data

https://raw.githubusercontent.com/msu-cmse-courses/cmse202-supplemental-data/main/data/breast-cancer-wisconsin.names

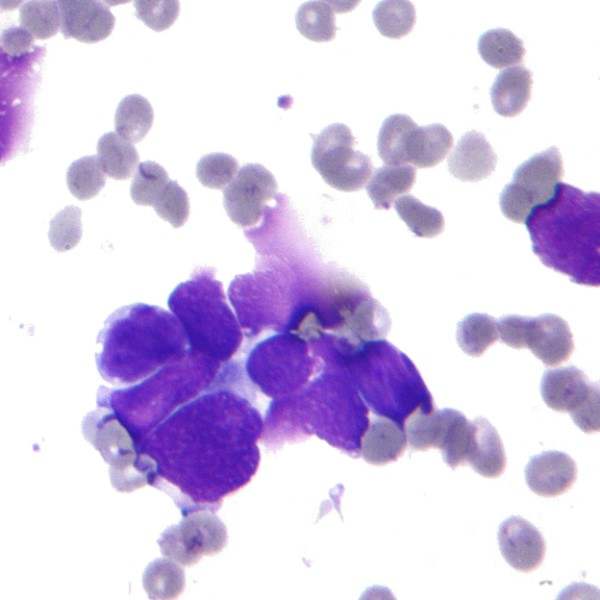

Each row of this data set is one patient case. The features are derived from fine‑needle aspiration, a minimally invasive biopsy technique used to sample cells from a suspicious lesion (e.g., a breast mass) for cytologic evaluation (microscopic evaluation of a sample on a slide). The features are subjective cytological descriptors scored on an integer scale, and the target is benign vs malignant.

✅ Do This: Read in the data, label the columns based on the .names file. Look at the dtypes, anything unusual? Why?

✎ What’s unusual about dtypes? Why?

# put your code here

✅ Do This: Can you identify what the problem is? If so, can you provide a DataFrame with just the rows that are causing the problem? There are lots of ways to do this so talk it out with your group. If you get stuck, talk with an instructor, so that you can move on to the next part of the assignment without spending too much time here.
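If your group gets stuck, one possible approach (among many) is to try converting a suspicious column to numbers and see which rows fail. The sketch below uses a made-up DataFrame with a made-up `"n/a"` marker, so the column names and the marker are assumptions, not what the real file contains.

```python
import pandas as pd

# Toy column that is read as text because of one non-numeric entry (made-up data)
toy = pd.DataFrame({"id": [1, 2, 3, 4], "score": ["5", "3", "n/a", "7"]})
print(toy.dtypes)  # "score" is not a numeric dtype

# Coerce to numbers; entries that cannot be parsed become NaN
as_numbers = pd.to_numeric(toy["score"], errors="coerce")

# Rows where the coercion failed are the rows causing the problem
problem_rows = toy[as_numbers.isna()]
print(problem_rows)
```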

# put your code here

As you hopefully discussed, we happen to have some rows with missing data values. So, as we’ve seen previously, we have an imputation problem.

✅ Do This: Write code to solve this missing data problem and say what you did.

By the way, read_csv accepts an na_values argument: a list of strings that should be treated as np.nan when the file is read, which is pretty darn convenient. Using it will help when importing the data for classification.
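Here is a small sketch of how `na_values` behaves, using an in-memory CSV string instead of the downloaded file. The `"?"` marker, the column names, and the two imputation choices shown (dropping rows or filling with the median) are assumptions for illustration; check your own data to see which marker it uses and decide which strategy you can justify.

```python
import io
import pandas as pd

# Toy CSV text standing in for a file on disk; "?" marks a missing value here
csv_text = "1,5,2\n2,?,4\n3,8,2\n"

toy = pd.read_csv(
    io.StringIO(csv_text),
    header=None,
    names=["id", "feature", "label"],
    na_values=["?"],  # treat "?" as np.nan while reading
)
print(toy.dtypes)  # "feature" is now float64, with NaN where "?" was

# Two simple options: drop incomplete rows, or fill with the column median
dropped = toy.dropna()
filled = toy.fillna({"feature": toy["feature"].median()})
print(filled)
```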

# put your code here

1.2 Splitting the dataset into training and testing sets#

Now that we’ve loaded and cleaned up the data, let’s split the data into a training set and a final testing set. We want to randomly select 75% of the data for training and 25% of the data for testing.

Also, you should turn the class_labels into 0 (currently 2, for benign) and 1 (currently 4, for malignant), as the classifier we are going to use (Logistic Regression) predicts values between 0 and 1.
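As a quick illustration of the relabeling, the sketch below maps a toy Series that uses the 2/4 coding onto 0/1. The Series itself is made up; `.map` is one option, and `.replace` works similarly.

```python
import pandas as pd

# Toy label column using the 2/4 coding described above (made-up values)
labels = pd.Series([2, 4, 4, 2, 2], name="label")

# Map 2 (benign) -> 0 and 4 (malignant) -> 1
binary_labels = labels.map({2: 0, 4: 1})
print(binary_labels.tolist())  # [0, 1, 1, 0, 0]
```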

✅ Do This: You will need to come up with a way to split the data into separate training and testing sets (we will leave the validation set out for now). Make sure you keep the feature vectors and classes together.

BIG HINT: This is a very common step in machine learning, and there exists a function to do this for you in the sklearn library called train_test_split. From the documentation, you will find that it takes the features and class labels as input and returns

4 outputs:

2 feature sets (one for training and one for testing)

2 class labels sets (the corresponding one for training and for testing)

Use train_test_split to split your data into a training set and a testing set that correspond to 75% and 25% of your data respectively. To ensure that some of the provided code below will work, you should use the variable names train_vectors, test_vectors, train_labels, and test_labels to store your results. Check the length of the resulting output to make sure the splits follow what you expected.

Important Note: You’ll need to break up your dataframe into a set of labels and a set of features before you do the train-test splitting. You also want to make sure your “features” don’t include any columns that don’t make sense to use as features (i.e., avoid including columns that would not be expected to have any meaningful influence on the labels).
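The sketch below shows the general pattern on a made-up DataFrame: separate the labels from the features, drop an identifier column, then split 75/25. The toy column names (`f1`, `f2`) and the `random_state` value are assumptions for illustration; on the real data you would store the results in the variable names requested above.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

# Made-up data: an id column, two integer features, and a 0/1 label
rng = np.random.default_rng(1)
toy = pd.DataFrame({
    "id": np.arange(40),
    "f1": rng.integers(1, 11, size=40),
    "f2": rng.integers(1, 11, size=40),
    "label": rng.integers(0, 2, size=40),
})

toy_labels = toy["label"]                          # what we want to predict
toy_features = toy.drop(columns=["id", "label"])   # id carries no predictive meaning

# 75% training, 25% testing; random_state only makes the shuffle repeatable
tr_X, te_X, tr_y, te_y = train_test_split(
    toy_features, toy_labels, train_size=0.75, random_state=42
)
print(len(tr_X), len(te_X))  # 30 10
```

Because features and labels are passed to the same call, each feature row stays paired with its label after shuffling.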

# Put your code here

## Here is some starter code to pull out the features and labels. You can modify this as needed and add the train test split code here as well.

# class_labels = df["label"].replace({2: 0.0, 4: 1.0})  # Pull out label column, replace "2" with 0 and "4" with 1

# features = df.drop(["label"], axis=1)  # Remove the label column to get the feature vectors

# features = features.drop(["id"], axis=1)  # Remove the id column since this unique subject/patient identifier is not a useful feature for classification

✅ Question: Why do we need to separate our samples into a training set and a testing set? Why can’t we just use all the data for both? Wouldn’t that make it work better?

✎ Do This - Erase the contents of this cell and replace it with your answer to the above question! (double-click on this text to edit this cell, and hit shift+enter to save the text)

2. Logistic Regression#

In the pre-class, you watched a video explaining some aspects of logistic regression. The full details of logistic regression require deeper study, but we can gain some insight by looking at the function we are trying to fit to our data. Plot the curve for the following equation, called the logistic function:

\[f(x) = \frac{1}{1 + e^{-x}}\]

✅ Do This: Create a logistic function and then make the plot of \(f(x)\) for \(x\) over the range -6 to 6.

# put your code here

What is interesting about that curve is that all values of x are mapped into the range 0.0 to 1.0 for y. Assuming you have a binary classifier, that is, one that has only two class labels, that is the mapping you want: all combinations of features map onto the two class labels, 0 or 1. Moreover, the graph looks a lot like a cumulative probability distribution. The probability that a set of features is of class 1 approaches 1 far to the right and 0 far to the left. As you watched in the pre-class video, this is the basis for logistic regression.
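A quick numerical check of that claim is sketched below. The function name `logistic` and the number of sample points are assumptions for illustration; passing `x` and `y` to `plt.plot` would draw the S-shaped curve.

```python
import numpy as np

def logistic(x):
    """Standard logistic function: maps any real x into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))

x = np.linspace(-6, 6, 121)
y = logistic(x)

print(y.min(), y.max())  # close to 0 on the far left, close to 1 on the far right
print(logistic(0.0))     # 0.5, the midpoint of the curve
```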

It’s considered a regression because the “x” in our logistic function is actually going to be a regression equation, such that

\[x = b_{0} + b_{1}x_{1} + b_{2}x_{2} + \ldots\]

for as many terms as we like, and the new logistic function is

\[f(x_{1}, x_{2}, \ldots) = \frac{1}{1 + e^{-(b_{0} + b_{1}x_{1} + b_{2}x_{2} + \ldots)}}\]

Logistic classification tries to find the values for the parameters \(b_{i}\) that give maximal performance on the training data and, hopefully, on the testing data. Let’s let statsmodels do that.
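As a small worked example of feeding a regression equation into the logistic function, the sketch below uses two features and hypothetical coefficients. The values of `b0`, `b1`, `b2`, `x1`, and `x2` are made up for illustration and are not fitted to any data.

```python
import numpy as np

# Hypothetical coefficients and feature values, chosen only for illustration
b0, b1, b2 = -4.0, 0.5, 0.8
x1, x2 = 3.0, 5.0

# The regression equation plays the role of "x" in the logistic function
z = b0 + b1 * x1 + b2 * x2    # -4.0 + 1.5 + 4.0 = 1.5

# Feeding it through the logistic function gives a value between 0 and 1
p = 1.0 / (1.0 + np.exp(-z))
print(z, round(p, 3))         # 1.5 0.818
```

Since `p` is above 0.5, this hypothetical sample would be assigned to class 1.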

We are going to use all the training data from above and train a logistic regression. It is similar to what we did before with regular regression.

Note, very importantly, the use of sm.add_constant on the training vectors. We talked about that when we did OLS in statsmodels. That column of constants is what the \(b_{0}\), or intercept, will train against. We need that column to get an intercept. (Note that this code requires that you used train_labels and train_vectors when you did the train-test split earlier.)

logit_model = sm.Logit(train_labels, sm.add_constant(train_vectors))

result = logit_model.fit()

print(result.summary() )

The “Pseudo R-squ.” is (mostly) the equivalent of the R-squared value that we looked at before in linear regression. It ranges from 0 (poor fit) to 1 (perfect fit). The p-values under “P>|z|” are measures of significance for each coefficient. The null hypothesis is that the restricted model (the one in which that coefficient is zero) does just as well, and a low p-value suggests that we can reject this hypothesis and prefer the model that includes the feature (i.e., LOW \(P\)-VALUE IS GOOD). The summary also reports an “LLR p-value”, which compares the full model against a constant-only model and plays a role similar to the F-test in linear regression.

✅ Do This: Based on the results from above, remove the low-performing columns and then recreate the training and testing sets and run it again. Display the summary.

# put your code here

✅ Question: How do the fits of the full model and the reduced model compare? What evidence are you using to compare these two fits?

✎ Do This - Erase the contents of this cell and replace it with your answer to the above question! (double-click on this text to edit this cell, and hit shift+enter to save the text)

2.2 What exactly is this model?#

The following code will display the final equation for the model you just fit. The coefficients in the equation should be the same ones you saw in the output of the result.summary() command above. The equation is the same as the one we had for linear regression, except that it is being fed into the logistic function.

def logit_equation(result, prob_form=True):
    """
    Build a string for the logistic regression equation from a statsmodels Logit result.

    If prob_form is True: y = 1 / (1 + exp(-(b0 + ...)))
    If prob_form is False: log(p/(1-p)) = b0 + ...
    """
    params = result.params
    if 'const' not in params.index:
        raise ValueError("Model must include a constant term named 'const'.")

    intercept = params['const']
    coefs = params.drop('const')

    # Start with the intercept, then append each signed coefficient*feature term
    terms = [f"{intercept:.2f}"]
    for name, coef in coefs.items():
        sign = " + " if coef >= 0 else " - "
        terms.append(f"{sign}{abs(coef):.2f}*{name}")
    linear_part = "".join(terms)

    if prob_form:
        eq = f"y = 1 / (1 + np.exp(-({linear_part})))"
    else:
        eq = f"log(p/(1-p)) = {linear_part}"
    return eq

# Source: Perplexity. Accessed March 13, 2026 from https://www.perplexity.ai/. Prompt Chain: "Logistic regression equation helper for Wisconsin breast cancer dataset."

# `result` is the name used for the fitted model earlier in this notebook; edit it to match the name of your own fitted model result variable

print(logit_equation(result, prob_form=True))

✅ Do This: Confirm that the coefficients in the equation above match the coefficients in the summary above. How many unknown (free) parameters were in the model? In other words, how many coefficients were learned by the model?

2.3 How good is the model?#

There are a number of ways that we can check the performance of our model, and we will continue to explore new ways throughout the semester. The major difference between the standard statistics approach and supervised learning approaches is that we test our models using the data that we held out: “the testing data.”

That is, we will use our classifier model to make predictions from the test features and we can then compare those predictions to actual test labels. To test accuracy, we can use the output of the .fit() method of the model to predict how well the classifier works on the test data (the data it was not trained on). Conveniently that is the .predict() method and, again, we use it on the result of the .fit().

Note: The output from .predict() is not a 0/1 value as the test labels are, but rather a fraction between 0 and 1 indicating how likely each entry is to be one class or another. We can make the assumption that anything greater than 0.5 would be a 1 class and anything less than 0.5 would be a 0 class.
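The thresholding step can be sketched on a few made-up probabilities as follows. The array `probs` is invented for illustration; with the model fitted earlier, the probabilities would instead come from `.predict()` applied to the test features with the same constant column added.

```python
import numpy as np

# Made-up predicted probabilities standing in for the output of .predict()
probs = np.array([0.03, 0.48, 0.51, 0.97])

# Anything above 0.5 becomes class 1, everything else becomes class 0
predicted_labels = (probs > 0.5).astype(int)
print(predicted_labels)  # [0 0 1 1]
```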

✅ Do This: do the following:

Use the .predict() method (look up the documentation as necessary) to create the predicted labels using the test input

Convert the output of the .predict() method to the 0/1 class values of the test labels

Print the resulting predicted class values of the test labels

# put your code here

One of the first metrics we will use in determining how well a machine learning model is working is the “accuracy score”, which compares the predictions our model made for the test labels and the actual test labels. This score is one of many metrics we can use and is included in sklearn.metrics as (surprise) accuracy_score(). Here’s the documentation on accuracy_score.
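As a tiny worked example, the sketch below scores five made-up predictions against five made-up true labels. The lists are assumptions for illustration only.

```python
from sklearn.metrics import accuracy_score

# Made-up true and predicted labels
actual = [0, 1, 1, 0, 1]
predicted = [0, 1, 0, 0, 1]

# Accuracy is the fraction of predictions that match: 4 of 5 here
acc = accuracy_score(actual, predicted)
print(acc)  # 0.8
```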

✅ Do This: Using the predicted 0/1 labels you just created:

Use the sklearn.metrics module we imported at the top and run accuracy_score on the 0/1 predicted labels and the test labels.

Print your accuracy result

# put your code here

✅ Question: How well did your model predict the test class labels? Given what you learned in the pre-class assignment about false positives and false negatives, what other questions should we ask about the accuracy of our model?

✎ Do This - Erase the contents of this cell and replace it with your answer to the above question! (double-click on this text to edit this cell, and hit shift+enter to save the text)

Confusion matrix (Time Permitting)#

Display a graphical version of the confusion matrix that summarizes the true positives, true negatives, false positives, and false negatives for the predicted labels compared to the actual test labels. This is one of the most common ways to evaluate the performance of a binary classification model. You can use sklearn.metrics.confusion_matrix for this. What do the values in the confusion matrix tell you about the performance of your model?
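Before drawing anything, it may help to see how the four counts are laid out. The sketch below uses made-up labels; for binary 0/1 labels, rows of the matrix are the actual classes and columns are the predicted classes.

```python
from sklearn.metrics import confusion_matrix

# Made-up true and predicted labels
actual = [0, 1, 1, 0, 1]
predicted = [0, 1, 0, 0, 1]

cm = confusion_matrix(actual, predicted)
print(cm)
# [[2 0]
#  [1 2]]

# For binary 0/1 labels, ravel() returns the counts in this order
tn, fp, fn, tp = cm.ravel()
print(tn, fp, fn, tp)  # 2 0 1 2
```

For the graphical version, recent versions of sklearn provide `ConfusionMatrixDisplay` in `sklearn.metrics`, and `plt.imshow(cm)` is a simpler alternative.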

# put your code here

Congratulations, we’re done!#

Now, you just need to submit this assignment by uploading it to the course Desire2Learn web page for today’s submission folder (Don’t forget to add your names in the first cell).

© Copyright 2026, Department of Computational Mathematics, Science and Engineering at Michigan State University